The Case for College

On March 13, 2024 by Jonathan Zdziarski

As long as a branch of science offers an abundance of problems, so long it is alive; a lack of problems foreshadows extinction or the cessation of independent development.

David Hilbert, 1900

As a self-educated professional working with the best in the field, I think I’m supposed to tell you that you don’t need college to be successful. My journey has been an unconventional one for sure. Growing up in a dysfunctional home with a schizoaffective and abusive father, surviving high school alone was barely manageable. The notion of college was unconscionable to a depressed teenager from a poor home with no parental guidance or support. Computers have been a part of my life since I was eight, where typing programs from the back of magazines into a Radio Shack TRS-80 took me places far away from my terrifying childhood. The highest level of education I’ve accomplished to date is a GED, after failing out of high school. What turned my trajectory around, second only to my faith, was falling in love with learning. I’ve learned a lot over the course of an ongoing 30-year career, and slowly worked my way up from building PCs and doing sysadmin work into software engineering, forensics, and security. With that has come the opportunity to make a lot of impact along the way that’s touched people’s lives, and a lot of self-education. This is a life I couldn’t have possibly imagined for myself. A great career with one of the best companies in the world, books written, a good living, and the opportunities to make long lasting impact. So why would you need college to do the same, especially when billionaires like Peter Thiel are willing to pay you six figures to drop out?

Thiel’s plan for you is a short-sighted one, and doesn’t take into account the difficulty you’re likely to face as a result of taking his offer. What’s missing from Thiel’s story – and all of his romanticized notions – is all the hard that comes with taking this path. Not just the financial hard that it takes, but the hard of navigating an unforgiving world without a degree – regardless of your intelligence. The hard in trying to make meaningful contributions to the scientific community and touch government sectors without a formal education. The difficulty of the mind in grasping for solutions to complex problems but lacking the theoretical foundation to connect with your higher-level knowledge, and the sense of feeling stupid for decades because of it. The hard in having to constantly prove you’re a better choice than the other candidate with a pedigree, no matter what level of experience you have in your field. Sure, you’re not me – I get that, but perhaps consider some of my experience spanning a tech career before you decide to quit school.

This Didn’t Have to Happen

On October 28, 2023 by Jonathan Zdziarski

The tragic mass shooting in Lewiston, Maine took me back to reflecting on our family’s struggles with my dad’s mental health. It’s been some eleven years since I wrote about his mental illness, and how the system failed all of us. Robert Card, the subject in the recent mass shooting, bore many eerie and disturbing similarities to what I’d written many years ago about my dad. Not much seems to have changed in terms of the state of mental health care since then. Card’s symptoms were classifiable, diagnosable, and not unique.

My father died in May 2020 in a psychiatric institution while everything was still locked down during COVID. We weren’t allowed to see him in person before he died, but I’d seen him several times prior to that, where my older children got to spend some time with him, and he had been able to meet his youngest granddaughter. Prior to his full commitment, he was diagnosed with schizoaffective disorder, which is a form of schizophrenia with a component of a mood disorder. This presented through his life, and in the cruelty I experienced through my childhood, with many similar and disturbing parallels to what I’d read about Robert Card from his family. The hearing voices, the paranoia, the truly believing that others wanted to harm him in his own terrifying world was an understatement. He lived terrified and angry. He heard strangers whispering his name in public. He believed in an evil consuming people to conspire against him.

My mom was the only thing that kept him somewhat grounded through his psychosis during my childhood, and went through much the same as Card’s family trying to convince him that others weren’t talking about him or conspiring against him. In my late teens, they divorced for the sake of everyone involved, and he secluded himself; much like the reports of Card’s breakup with his girlfriend, this destabilization seemed to have sparked or accelerated a downward spiral. My dad became terrified that he was constantly being pursued by evil people, scanning his brain or trying to steal his body parts. He believed his doctors were trying to poison him. He had suffered from long-term ulcerations in his legs from arterial insufficiency, which eventually led to a chronic state of infection with giant holes and visibly exposed bone. He believed for a long time that aliens, or the devil, or just evil people – were conspiring to steal his legs from him. This came to a conclusion when his legs had become so bad that doctors told him the only option to survive was a double amputation, which played right into his terrifying psychosis. He refused, naturally, as it was his worst fear imaginable. We had the compassion to honor his wishes. He died soon after of anemia of infection. It wasn’t until after his death that I fully understood that all the cruelty he exhibited throughout his life was a product of his severe mental illness, and began to pity him for his own life having been stolen from him. Prior to getting sick, he had been a skilled draftsman working in nuclear power plants, and a relatively good father until I was around four years old – I remember when his mind became ill. Later in life, my hatred for him and his cruel and abusive behavior through my childhood somehow turned to compassion seeing him as a frail shell of a man toward the end of his life, and through ensuring that he was cared for as his guardian and someone he trusted, I found forgiveness and understanding.

Good Medicine for Imposter’s Syndrome

On July 21, 2023 by Jonathan Zdziarski

Much of what we perceive about others in the workplace is their performatory character – what others are inviting you to believe about themselves; it’s an attempt to become the idealized version of ourselves by acting the part. Most of us are very competent in the field, but even still everyone gets imposter’s syndrome from time to time. For the self-taught professionals in tech, it can be the dead body we keep dragging around with us even while making advancements in the field. Some university graduates, too, have struggled with this decaying corpse that plagues the tech world. Left unchecked, it often leads to a devalued sense of self, depression, and even triggers other mental health problems – even in those whose performatory character would otherwise make them appear well put together. I got into professional tech work at the age of 16, some 32 years ago, at a small computer shop building PCs. Having never had the opportunities others had to go to college, I’ve had to grow and adapt my skillset over the span of my career. Imposter’s syndrome – and depression – has been along with me for much of my adult life. Even with what continues to be an excellent career at Apple, I’ve struggled with self-worth. Work environments can be nurturing and stimulating, and bring out the best in you; they can also be demotivating and devalue you – imposter’s syndrome can follow you around through both. I’ve figured a few things out about myself over the past 32 years that have helped me navigate some difficult environments. Nobody develops imposter’s syndrome overnight. Any sickness that is chronic requires a long term cure. There’s nothing anyone can tell you that will simply fix imposter’s syndrome; there are incremental ways to slowly recover from it though.

Oxford’s definition of imposter syndrome is the persistent inability to believe that one’s success is deserved or has been legitimately achieved as a result of one’s own efforts or skills. In tech, this usually means we feel stupid because we don’t think we have the understanding or mastery we think we should. It’s interesting, though – people often tend to feel like it’s because they’re not smart enough. We are definitely smart enough to do this job. The reason we don’t have understanding isn’t because we’re missing brain cells. One thing that computer science is good at is abstractions, and that allows us to work with and learn higher level concepts without needing knowledge of the world beneath it. One might say it’s what makes computing so great. Imposter’s Syndrome seems to prey on the benefits afforded to us by abstractions to introduce uncertainties about our abilities. But there is a way to think in such a way that allows for these abstractions to exist, where X can remain unknown and it won’t bother you, but simultaneously see a universe where X fits in.

If you look at a lot of the brightest minds in computer science, there’s a distinguishable acumen about them that goes beyond simply knowing the subject matter. They have a scientific mind; able to not only explain something, but they’re able to theorize and reason about it, and able to analogize. These are the kinds of skills that make for not only a good scientist, but a good engineer. It’s these same qualities that seem most desirable when we measure ourselves up, and often what smart assholes do such a terrible job trying to mimic. But this acumen doesn’t come from reading source code, mentoring by coworkers, or from reading The Imposter’s Handbook. These qualities come from a combination of foundational knowledge, methodical reasoning, and discipline. Things a lot of self-taught people like me don’t initially get a lot of exposure to. What I think a lot of people want to feel is that they are legitimate. That their knowledge isn’t fake or piecemeal, and that they are armed with the discipline to reason, make advancements, and solve complex problems. So here’s the pat on the shoulder: You’re probably very good at the subject matter you’re trained in, and you are no doubt intelligent if you are working in tech. Here’s the hard: The abstractions we work with in computing have allowed us to develop gaps, and those gaps make us feel really dumb sometimes. To treat your imposter’s syndrome, we’ve got to work at this.

AI is Just Someone Else’s Intelligence

On May 4, 2023 by Jonathan Zdziarski

Mechanical arts are of ambiguous use, serving as well for hurt as for remedy.

Francis Bacon

It’s been a long time since I’ve worked in the field of ML (or what some call AI), and we’ve come a long way from simple text classification to what’s being casually called generative AI today. While the technology has made many advances, the foundational concepts of machine learning have remained analogous over time. ML depends heavily on a large set of training data, which is analyzed to pull out its most interesting and defining features, and this becomes the basis for training a model. The process might involve parsing text, or performing analysis like object identification or analyzing stylistic features in art. Each of these is, in itself, a smaller – but mathematical – process. I experimented with a primitive form of meta-level learning in text classification several years ago, which may help convey the general idea. This identifies “features” of the reference sample being trained. The features this process pulls out can be simple, like words in a document or pixels from a handwriting sample, though today can be more sophisticated “critical patterns” correlated to literary authorship or artistry, such as patterns within art and music composition, sometimes stored in other models. Whatever the content is, the purpose of the training algorithm is to converge patterns and correlations across the data to build a weighted or structured model. The most interesting patterns in the training data influence weights or probabilities, creating a hidden layer: millions of “gears” that converge to compute the most statistically significant outcomes. In this sense, the term “learning” is a bit of a stretch; what’s happening is more along the lines of mathematical transcription of a set of features; adjusting the weights to solve a really big linear equation. Feature selection is one of the key differences between various ML models, and why you have some constructing music, while others render art. 
The math is pretty consistent – more sophisticated machines like neural nets are typically trained using backpropagation and gradient descent, while other machines such as chat bots and text generators might use weighted Markov models or Bayesian networks. These approaches have been applied to everything from natural language processing and handwriting recognition, to today’s work in genome sequencing and autonomous driving. Still, these traditional forms of machine learning are not much more than a sophisticated pattern recognizer. It is largely a deconstructive process with coefficients and statistical magic.
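To make the idea of "features" and "weights" concrete, here is a minimal sketch of a word-based text classifier in the naive Bayes style. It is only a toy under stated assumptions: the function names (`tokenize`, `train`, `classify`), the labels, and the four training sentences are all hypothetical, invented for illustration, and it is not the meta-level learning approach mentioned above nor any particular production system.

```python
import math
from collections import Counter

def tokenize(text):
    """Crude feature extractor: each lowercase word is one feature."""
    return text.lower().split()

def train(samples):
    """Count how often each word feature appears under each label.

    samples is a list of (text, label) pairs; the labels are hypothetical.
    """
    word_counts = {}
    label_counts = Counter()
    for text, label in samples:
        label_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(tokenize(text))
    return word_counts, label_counts

def classify(text, word_counts, label_counts):
    """Pick the label whose learned word statistics best explain the text."""
    vocab = set()
    for counts in word_counts.values():
        vocab.update(counts)
    total = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, counts in word_counts.items():
        # Start from the prior, then add one log-weight per word feature.
        score = math.log(label_counts[label] / total)
        denom = sum(counts.values()) + len(vocab)  # add-one smoothing
        for word in tokenize(text):
            score += math.log((counts[word] + 1) / denom)
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Made-up training data, purely for illustration.
TRAINING = [
    ("win free money now", "spam"),
    ("free prize claim now", "spam"),
    ("meeting notes attached", "ham"),
    ("lunch meeting tomorrow", "ham"),
]
word_counts, label_counts = train(TRAINING)
print(classify("free money", word_counts, label_counts))
print(classify("meeting tomorrow", word_counts, label_counts))
```

Nothing here "understands" spam. The model is a table of counts derived from someone else's text, and classification is arithmetic over that table.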

Today’s generative AI still goes through this type of deconstructive process, but also has a formative element. Where these new approaches excel is in going beyond parsing information into a knowledge base, but now also applying a formative process to that information – what we might conflate with intelligence, but still falls short of what most would consider the result of human reasoning. To present the data in some coherent form, this involves training not just the information, but the many dimensions of that information (such as the number of different contexts a word may be used in), or in the context of constructs and critical patterns of that information (ABBA, or 1-4-5, as very basic examples), enabling it to formulate an output in the pattern of an existing set of learned reference samples. Basic linear math finds where those dimensions intersect, creating context. But even modern training approaches, such as those used in the transformer model, still require supervised testing to tell the model what bits of its output are garbage, so that the output eventually looks intelligent; it is actually closer to “filtered garbage”. So identifying the pattern of Iambic Pentameter, for example, is still an artificial process. It can be computed mathematically with a large enough data set. Scale those patterns to music, art, literature, and the more sophisticated patterns that make up our repertoire of human creativity and it is impressive – but still synthesized. Information processing is still very primitive, and lacks many of the traits of human understanding. The inability to conceive tradition, authority, and prejudice is why all of this advanced technology still leaves us with Nazi chatbots. Some would call this confirmation theory, which is an area quite underdeveloped (and the AI reading this wouldn’t disagree). Even the raw objectives of AI are based on human-engineered goals, and evaluated using performance metrics to select the best behavior. 
This is a very mechanical process. Certain behaviors we may view as creative tasks may in fact be simple randomness introduced into most AIs to avoid infinite logic loops. In short, a lot of what you see is quite the opposite of the autonomous, self-motivated behavior it looks like. Any good AI behaves rationally only because someone programmed good objectives into it. Garbage in, garbage out.

One of the big differences between traditional forms of ML and generative AI is the direction in which the data flows. Traditionally, inputs flow into the system for training and queries. To train traditional systems, you’d suck in “a bunch of other people’s stuff”, and it identifies all of the interesting patterns that are then compared with the input sample. Generative AI takes this a step further, and flips the switch on the vacuum cleaner – and now all of the dirt that was initially fed into the system is shot out the pipe to produce the equivalent of a digital dust cloud of the original training medium. The output of generative AI takes the critical patterns and concepts weighted during the AI’s training and applies some formative computation to produce its own reference sample as a result. Neat-o. Nice parlor trick.
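The "reversed vacuum cleaner" can be sketched with the simplest kind of text generator, a word-level Markov chain. This is a toy, assuming a tiny made-up corpus and hypothetical helper names (`build_chain`, `generate`); modern generative models are far more elaborate, but the sketch shows the basic property that every word and every word-to-word transition in the output was taken from the training text.

```python
import random
from collections import defaultdict

def build_chain(text):
    """Training: record which words follow which in the source text.

    Repeated followers are kept, so common transitions are picked more often.
    """
    words = text.split()
    chain = defaultdict(list)
    for current, following in zip(words, words[1:]):
        chain[current].append(following)
    return chain

def generate(chain, start, length, seed=0):
    """Generation: walk the chain, choosing each next word at random."""
    rng = random.Random(seed)  # seeded so runs are repeatable
    word = start
    output = [word]
    for _ in range(length - 1):
        followers = chain.get(word)
        if not followers:  # dead end: this word was never followed by anything
            break
        word = rng.choice(followers)
        output.append(word)
    return output

# Made-up corpus standing in for "a bunch of other people's stuff".
CORPUS = "the cat sat on the mat and the dog sat on the rug"
chain = build_chain(CORPUS)
sample = generate(chain, "the", 8)
print(" ".join(sample))
```

The randomness makes the output look novel, yet the generator cannot emit a transition it never saw in the corpus.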

With billions of dollars, this ML scales to perform impressive computational tasks. The risk of this type of system goes beyond the traditional vision of a robot building a better chair, or replacing a worker at a plant. Today’s ML systems are white collar professionals and don’t require mechanical bodies; the computational capabilities of these systems can replace a broad array of professions using the thought product of millions of humans at once – so how could anyone compete with that? No one was ever supposed to, in fact. Douglas Engelbart, pioneer in the field of human-computer interaction, saw AI’s value more in intelligence-augmentation (that is, IA rather than AI), as a means of assisting the worker. Corporate greed has already led to the recent misapplication of AI, using its advanced capabilities to replace, rather than to augment, humans. Hollywood’s ML generation of “extras” is quite an extreme and literal example of this. But corporate greed isn’t AI generated. AI is replacing employees for very human reasons that have little to do with artificial intelligence itself. Yet correct computer-human interfaces are a fundamental principle that many computer scientists and science fiction authors alike fear will be broken. Should you hate AI? No, you should hate greed.

The cold irony is this: at a deconstructed level, the output of generative AI represents the collective intelligence of other people’s thought products – their ideas, writings, music, theology, facts, opinions, and so on, likely also including those who lose their job to it. This also means others’ patents and copyrighted works, either directly or indirectly. ML has proven wildly successful at identifying the most effective critical patterns and gluing them together in some coherent form that communicates a desired result – but at the end of the day, all of its intelligence indeed belongs to the other people whose content was used to train it, almost always without their permission. In the end, generative AI takes from the world’s best authors, artists, musicians, philosophers, and other thinkers – erasing their identities, and taking their credit in its output. Without the proper restraints, it will produce the master forgeries of our generation. Should we forget its limitations and begin to rely on it for information, AI will easily blur the lines between what we view as real facts and synthesized ones. Consider a recent instance of this, where an attorney got himself in hot water for citing case law that didn’t exist – AI had seemingly fabricated it, where the attorney thought they were leveraging AI to do research. Imagine the impact to future case law should courtroom outcomes be based on unchecked fictional precedent!

Since When Don’t We Bless Gay People??!?

On February 23, 2023 by Jonathan Zdziarski

How can I curse those whom God has not cursed?

How can I denounce those whom the Lord has not denounced?

I have received a command to bless; he has blessed, and I cannot change it.

Numbers 23

Christians are expected to be a people who bless. We were commanded so, in fact. Luke 6:28 goes so far as to instruct Christians to “bless those who curse you, pray for those who mistreat you”; Romans 12:14 echoes this, “Bless those who persecute you; bless and do not curse”. The Christians closest to Jesus were instructed to bless in even the most extreme sense – those that would later torture and murder them for their very own Christian beliefs. How ironic then that bishops from the Global South Fellowship of Anglican Churches recently ousted the Archbishop of Canterbury for offering blessings to gay couples. A harmless gay couple looking to participate in the church hardly sounds like a threat to the faith – yet scripture would still insist we bless them even if they were. Anyone looking to be part of the church should in fact be welcomed with open arms. This matter is not one of a liberal or conservative posture. This is a matter of basic Christian love and grace – actually acting like a Christian.

While there’s a lot of room in the Anglican community for differences of opinion, it is within the very fabric of a Christian to be a people who bless. Christians are called to love as Jesus has loved us (John 15:12). Blessing others is one of the ways in which a Christian mimics Jesus; “If you love those who love you, what credit is that to you? If you do good to those that do good to you, what credit is that to you?” Refusing to bless others who don’t meet your bar of acceptability is not only inconsistent with the Jesus of scripture, it is exactly the kind of behavior Jesus railed against in the religious leaders of his day.

The church has taken the common practice of blessing others and wrapped it into more formal liturgy, which is fine. Building fences around these man-made constructs, however, is playing with fire, at best, and at the end of the day any theology that inhibits doing one of the most basic things that defines a Christian is just poor theology. In my opinion, such disobedience to the Christian ethos has no place in the church. What troubles me is that not only should these bishops know this, it should have been written on their hearts. We are not the gatekeepers of blessing, we are the salt of the earth.

Since when did Christianity become such an entitled religion? What the world needs is more blessing. More peace. More of God’s outpouring. Not less. I am grateful that in all of my failings as a Christian, God has still had his hand of spiritual blessing on me. It is the infinite grace of God that transforms people’s hearts and lives, yet that grace seems to all be forgotten when you put on a funny hat. It is apropos that during this Lent season, we should be remembering the ashes from which we came, and just how wretched, miserable, poor, blind and naked we all are without God. It is only by his grace that any of us should receive any blessing at all. As another Anglican bishop recently wrote, “we can only fully embrace God’s love and mercy when we come to terms with how completely unworthy of it we are.” Perhaps these other bishops – and maybe the rest of us – could use a reminder from Matthew 10:8: Freely you have received, freely give.

Elon Musk Cannot Fathom Free Speech

On December 17, 2022 by Jonathan Zdziarski

I’ve recently written about the problems with social media in provoking speech and conformity, as well as the cult phenomenon that social media companies capitalize on. Elon Musk’s recent purchase of Twitter seems an apropos time to address the direct suppression of free speech.

Among Musk’s poorly thought out misadventures, he recently and rightfully reinstated the Twitter accounts of several journalists who had been critical of him in the past, whom he had previously rage-banned without warning. What’s really appalling to me isn’t that he suspended them in the first place (which was deeply troubling), but rather the guise under which he reinstated them. Like many of his twit-decisions, Musk started with a Twitter poll, regarded as having roughly the same credibility as a Russian election. This was followed with a decree that “the people have spoken”, referring to the disenfranchised twelve year olds, Russian trolls, and bots that vote on Twitter. Musk uses this business strategy, which cost $44 billion in research, whenever he wants to make a public policy decision that doesn’t involve putting people out of work. This policy-related polling seems almost an attempt to make the Twitterverse feel empowered by the new CEO.

Yet while Musk might have his users believe that they are now participants in the free speech narrative, the very concept of free speech itself is at odds with – even downright hostile to the notion of crowd-sourced policy. The Bill of Rights was designed intentionally to “prevent a sheep and two wolves from voting on what’s for dinner”. It seems to elude Musk that the right of free speech exists at a level higher than himself; that, rather than handing it out by vote, he is a mere steward of it with the responsibility of defending it. The Twitterverse at large has not and should not be empowered to make decisions about what speech to permit, because doing so destroys free speech. Failing to understand the requirements of such a basic human right is a dangerous thing for someone dictating policy of any system that depends on it. Musk, rather, seems to lack either the capacity or the restraint to make responsible decisions about free speech, or how to distinguish free speech from misinformation (today’s “Fire!” in a crowded theatre). Musk’s inability to handle such a delicate instrument of civil society is truly terrifying given the sheer amount of unilateral power he now has over public discourse.

Twitter was already a sick animal when Musk took over not long ago; the idea of giving a popular vote on speech policy to all users is not just the adolescent prank it looks like, but stands to set a dangerous norm across all social media platforms unless users push back on such an offensive thing. A society that believes the people should be allowed to choose what speech is acceptable is a society that burns books and compels conformity. Musk is simply taking the first step by normalizing this type of behavior among the online community. Anyone who is a free speech advocate should be condemning, not participating in it. If Musk doesn’t start to apply his brain here rather than his ego, Twitter 2.0 could very easily resemble German Student Union 1.0. Empowering children over others was how things started to go wrong back then too.

I had struggled to propose a solution to this problem, at least as far as Twitter is concerned, and then awoke to the most appropriate and fitting news on the subject: Musk created another poll, in which Twitter users voted he resign his post as CEO. It seems he occasionally does poll before putting people out of a job.

On Abortion and False Piety

On November 16, 2022 by Jonathan Zdziarski

The priest shall bring her and have her stand before the Lord. Then he shall take some holy water in a clay jar and put some dust from the tabernacle floor into the water. After the priest has had the woman stand before the Lord, he shall loosen her hair and place in her hands the reminder-offering, the grain offering for jealousy, while he himself holds the bitter water that brings a curse. Then the priest shall put the woman under oath and say to her, “If no other man has had sexual relations with you and you have not gone astray and become impure while married to your husband, may this bitter water that brings a curse not harm you. But if you have gone astray while married to your husband and you have made yourself impure by having sexual relations with a man other than your husband”— here the priest is to put the woman under this curse—“may the Lord cause you to become a curse among your people when he makes your womb miscarry and your abdomen swell. May this water that brings a curse enter your body so that your abdomen swells or your womb miscarries.” Then the woman is to say, “Amen. So be it.”

Documented use of an Abortifacient, Numbers 5:16-22

In May 2022, white evangelical Christians woke up to some rather unexpected news. A draft opinion had somehow leaked out of the Supreme Court, suggesting that Roe v. Wade would soon be overturned. Shortly after, it was. I single out white evangelicals here because, according to a recent Pew Research study, they are twice as likely to want to see abortion outlawed than other Americans (including other Christians). It would be an error though to conclude this means white evangelicals are the most pro-life. No no no, this is not the case at all. White evangelicals are no more pro-life than other religious groups, Christian or otherwise – they are, however, the most autocratic. Yet those who would use the Bible to institute government sponsored morality seem to have forgotten where the bodies are buried: also in their Bible.

The concept of abortion is nothing new. The practice of inducing an abortion as punishment for unfaithful women was once conducted as part of priestly duties in pre-Christian Judaism. A woman suspected of adultery, yet maintaining her innocence would be partially stripped, treated as an animal (right down to the presentation of an animal’s meal offering), and made to drink a type of holy water concoction; it was believed an unfaithful woman would abort her lover’s fetus and die within up to three years were she guilty (Mishnah Sotah 3). Holy water has a long tradition of being used to cleanse and purify, and so the implication was that the illegitimate fetus was evil, and therefore must be purged from the woman. Behind the scenes, this seemed to have more to do with the financial aspects of marriage contracts and intimidation than it did holiness, and the practice was eventually ended prior to the destruction of the second temple. Today’s American evangelicals take the opposing viewpoint of their ancestors – namely, against all forms of abortion – yet still firmly hold onto the practice of controlling women in much the same way. Yet while many other Christians value life just as much as autocratic evangelicals, we differ greatly from them particularly on a solution to the number of unwanted pregnancies in the country. The earliest Christians opposed abortion by adopting others’ discarded and unwanted live babies – a Roman practice known as “infant exposure” would leave abandoned babies in the trash or otherwise discarded after birth, left to die or be raised as slaves and prostitutes by others. It was this practice that many early writers condemned as “the worst abomination of all” (Philo of Alexandria). They wrote about Roman abortion practices far less. 
Yet while early Christians put their faith into action by sacrificially taking in these babies to save them from such a fate, today’s evangelicals largely believe opposing abortion through politics and legislation is the only solution. Most others believe it is an ineffective and dangerous solution – perhaps just as dangerous as the ancient practice that once caused them (or at least was perceived to; the practice’s effectiveness was highly questionable among rabbis).

Forced morality is likewise nothing new. In the book of Chronicles, King Josiah breaks down the altars of false gods, tears down carved images, and rids Judah and Jerusalem of the ungodliness of the time. When his priest finds the Book of the Law, Josiah tears his robe and imposes moral rule according to the laws of the book. The chronicler Ezra writes, “Josiah removed all the detestable idols from all the territory belonging to the Israelites, and he had all who were present in Israel serve the Lord their God. As long as he lived, they did not fail to follow the Lord, the God of their ancestors.” An often overlooked detail in this story is that in spite of a society living under (and clearly practicing!) moral law, God tells Josiah that he will take his life early so that he will not see the disaster God plans to bring about. A useful object lesson can be found here: perceived morality counts for little when it is compelled. At the center of today’s controversy is not really Christian doctrine at all (there is no Christian doctrine concerning abortion), or even morality, but rather the same desire for power; today, that translates to the church’s desire for socio-economic power.

Evangelical Christianity is Broken

On November 15, 2022 by Jonathan Zdziarski

In the beginning wickedness did not exist. Nor indeed does it exist even now in those who are holy, nor does it in any way belong to their nature.

Athanasius, Against the Heathen

I’ve devoted much of the past 30 years as an evangelical Christian “layperson” to Christian studies to try and become an educated one. Greek, theology, the patristics, and Christian history should be in the wheelhouse of every Christian, yet many never study their own religion, and merely live confined to the prison of their own prejudice. Most Christians can’t tell the difference between culture and doctrine, and often conflate the two. It is, therefore, of little surprise that what Christianity has become in America is more or less a product of a news cycle, and less about a gospel of a meek savior. Evangelical Christianity in America broke in 2020, though perhaps some would say it’s been broken longer.

Ever since, the church has stopped being recognizable – even to many Christians – in her embrace of the racism, hostility, and misinformation that many Christian believers proliferate. It often failed to resemble a church at all, resembling instead a counterfeit designed to look like Christianity in name only, almost certainly alien to what was truly being worshipped. The year 2020 brought out some of the worst in the mainstream evangelical church. Relatives, friends, and people I’ve grown up with, who were once a much-needed example of Christianity to me, have severely disappointed me in how they’ve conducted themselves, causing me to question if they ever truly understood their own faith.

The Art of Understanding

On July 4, 2022 by Jonathan Zdziarski

We cannot understand without wanting to understand, that is, without wanting to let something be said… Understanding does not occur when we try to intercept what someone wants to say to us by claiming we already know it.

Hans-Georg Gadamer

Users of social media are attracted to platforms supporting free speech and open communication. The business motivations of social media are too, but for a different reason. A social media company’s valuation is largely driven by user activity metrics, from which advertising and media value are derived. The free speech that users value often turns out to be provoked, induced through controversy or cult phenomenon. Platform disruptors help drive up user activity by provoking speech, which benefits the value of the platform. The more disruptors a platform has (and the more freedom they’re given), the more controversy and virality will exist to improve those metrics that drive valuation. Provoked speech isn’t really free. The consequences of a platform engendering controversy and virality can be seen in the obvious de-evolution of social norms online: civility is rare, cruelty is ever increasing, and understanding no longer has the currency it once had. Outrage pays.

Understanding is key to any civil society. In America, we usually don’t take the time to understand one another anymore, particularly online. Without fully appreciating someone’s perspective, we usually end up seeing others through our own universe of norms; through our “own lens” as one might say. But it is that person’s own culture, knowledge and norms that influence their prejudices, their beliefs, and their treatment of a subject. Their experiences – not ours – formed their views. The only correct way to understand someone then is through their lens, treating our own as an impairment begging for a corrective prescription.

One of the great modern philosophers, Hans-Georg Gadamer, saw the study of hermeneutics as a means of gaining understanding of “the other” through an effort to transpose a person’s experiences, prejudices, and culture in such a way that they could be uniquely appreciated despite the narrowness of our own. Think of it as a translation problem. When the effort is successful, there is a broadening of horizons to better understand how “the other” formed their network of beliefs, free from our own prejudices and norms. The rather sterile and parochial word hermeneutics might remind you more of Sunday School than social media, or more of the type of legal research often used to interpret historical law than of anything that could explain the psychology of a news cycle. If you were to consult college texts, you’d walk away quite certain that hermeneutics has nothing to do with everyday life and is the thing of dry people doing even drier historical things. Yet the doldrum historical sciences that employ hermeneutics have been grasping at the same basic goal to understand, which we often lack in social media.

How to Write Meaningful Assault Weapons Legislation

On May 24, 2022 by Jonathan Zdziarski

I originally published this in 2012, after the Sandy Hook shooting, and dust it off every time there’s a random mass shooting in the news. This post has seen the top of my feed year after year, as politicians continue to offer nothing, failing the majority of Americans who want to see new laws passed on firearms, prioritize mental health care, and integrate police more closely with schools to protect children, rather than compete with Wal-Mart to protect merchandise. I am deeply saddened by the recent mass shootings in the news, but even more saddened at what ineffective, impotent leaders we continue to elect in this country year over year.

I’ve been a longtime responsible gun owner, by the old definition of what that used to mean. Like a majority of them, I’ve wanted more controls on semi-automatic rifles – particularly assault rifles – for a long time. There’s idiocy on both sides of this debate, and both have some questionable notions about them. The extreme left seems to have developed an irrational fear and hatred of all guns and the extreme right ignorantly believes the only solution to guns is more guns. Consider this more sensible perspective from someone who spent over a decade shooting and working on guns, held NRA certifications to supervise ranges and carry concealed weapons, and up until some years ago – when I sold the rights to it – produced the #1 ballistics computer in the App Store.

While often obscure to most, there is – today – a system in place to perform intensive checks of individuals looking to own firearms categorized as highly lethal; the problem is it isn’t being used to control most assault rifles. Introduced in the National Firearms Act legislation, this system was applied to machine guns, short-barreled rifles, silencers, sawed-off shotguns, and other types of firearms that individuals can still legally own today, but under more than the casual regulation applied to AR-15s and other firearms. It could be changed to include semi-automatic rifles with the stroke of a pen. In my opinion, it should be, and in this post I’ll argue why I’d like the President and legislators to push for this.

Edward Snowden in Hindsight

On March 22, 2022 by Jonathan Zdziarski

I only regret that I have but one life to lose for my country.

Nathan Hale

On the day of Nathan Hale’s execution, a British officer wrote of Hale, “he behaved with great composure and resolution, saying he thought it the duty of every good Officer, to obey any orders given him by his Commander-in-Chief; and desired the Spectators to be at all times prepared to meet death in whatever shape it might appear.” Nearly ten years ago, I viewed Edward Snowden as a slightly nerdier, yet similar patriot to the greats. I wanted to believe he was serving his country, and was unfairly targeted by the state for standing up for those beliefs. Much of tech did too, which is why this is an important discussion to have. It’s affected how the tech community views and interacts with government in many ways, with all of the prejudices it brought into play. For all the pontificating about freedom that Snowden has done since then, his taking up permanent citizenship in Russia, and his silence since the beginning of the war with Ukraine (except, more recently, to criticize the US once more), today I see in Snowden the pattern of a common deserter, rather than the champion of free speech that some position him as. If Snowden is to set the narrative for how tech views and responds to government, then our occasional criticism of his own behavior should be fair game.

During his time in Russia, we have seen the whistleblower system work effectively here at home. The details of Trump’s Ukraine call, and the subsequent freezing of security aid, seem rather relevant today. More impressively so, this same whistleblower system Snowden criticized worked against a sitting president having no capacity for restraint. The fruits of it were significant, and the process brought both public dissemination and a full press by Congress to protect the whistleblower. Mr. X, whose identity is still somewhat contested, was a hero. He stood up to the bully, knowing better than most how lawless the tyrant was, and of the angry mob he commanded. What happened to X? Very little, certainly far less than the charges Snowden brought on himself or the freedoms he gave up by not using the right channels. Instead of following process, Snowden fled the country under the Obama administration, which was a teddy bear compared to Trump’s. Snowden rejected this government process, insisting the whistleblower system was corrupt, using it as justification to leak classified documents, shortly before departing the country. In 2020, he asked us to excuse him again while he applied for Russian citizenship “for the sake of his kids”. Yet even in being proved wrong by a true hero like X while the country lived under a tyrant, Snowden continues to hide from the consequences of this terrible miscalculation.

Christianity’s End-Times Conspiracy Theories

On January 1, 2022 by Jonathan Zdziarski

What more is there for their Expected One to do when he comes? To call the heathen? But they are called already. To put an end to prophet and king and vision? But this too has already happened. To expose the God-denyingness of idols? It is already exposed and condemned. Or to destroy death? It is already destroyed. What then has not come to pass that the Christ must do?

Athanasius, On the Incarnation

Christianity introduced me to a God who interacted with humanity to offer a life greater than myself. This made a lot of sense to seventeen-year-old me. It still does. Christianity in America comes with a lot of baggage, though. Along with the powerful message of the gospel come a lot of strange ideas about the creation and destruction of the world. Depictions of a violent and terrifying last days are often portrayed both in Hollywood fiction and from the pulpits of American churches. I spent many of my younger years as a friend to a fireball end-times preacher, who sadly died of COVID recently. Having been immersed in a church community with end-times motifs often present, it became apparent to me over time that evangelical Christianity seemed to have conflated faith with magic, losing touch with historical Christian beliefs. Modern interpretations of end-times prophecy have become increasingly embellished within many churches, incorporating new themes from current events into a sort of theological composite to explain present-day unrest. Such theories replaced the pattern of a historical Jesus, who advocated non-violence, with one who is now seemingly the perpetrator of pointless violence, judgment, and terrifying death. These beliefs have altered the entire world view of the evangelical church to adopt a militant, warfare-influenced mindset.

The concept of a violent and militant Jesus probably had its origins in the medieval period. The idea was first codified at the Council of Nablus in 1120, where Canon 20 permitted a clergyman to take up arms in self-defense without bearing any guilt; this was during turbulent times when Christian pilgrims were often massacred by the hundreds along their journey, leaving their rotting corpses along the road from Jaffa into the Holy Land. This one concession, intended to be a temporary measure, seeded militant movements in Christianity starting with the Papal legitimization of the Templars movement (“God’s Holy Knights”), extremist groups such as Alfonso I’s Brotherhood of Belchite, the Pastoureaux, and now reaches into modern day militant Christian ideals. End-times theories today evolve within evangelical churches to reinterpret current events into an apocalyptic context. They attract fringe groups with similar mindsets, as they include the same elements – oracle-sourced apocalyptic theories that lead to violent, anti-establishment outcomes. At the very least, today’s evangelical end-times worldview gives cover to white supremacy, replacement theory, and anti-government extremism. Yet this is in conflict with the teachings of Christ and hundreds of years of church fathers about martyrdom, pacifism, and government non-involvement. The obvious contradiction of a Christianity asserting a struggle that is “not against flesh and blood” somehow ending up with a literal war against flesh and blood is the result of a theological evolution that influenced how the church interprets scripture and forms doctrine today. To not believe in a brutal and imminent end times means, in many churches, that you don’t have a Christian faith at all.

Theories about masks, vaccines, the World Health Organization, and a new president are popular topics of recent end-times discussion within churches. The idea that anyone can speculate on end-times prophecy has attracted conspiracy groups like QAnon, which now counts up to 25% of white American evangelicals as believers. Denominationalism, while having some benefit, has also become a significant enabler of confirmation bias in the church, allowing for tribal systems of otherwise fringe beliefs to find support. These beliefs have become more extreme as a result of the social dysfunction created by COVID and deep divisions in politics. Beliefs about masks, vaccines, and other current topics are now loosely joined to end-times concepts of one world government, the mark of the beast, eternal punishment, or other themes in Revelation. Conspiracy theories within the church’s walls have had very real consequences. A study from the Public Religion Research Institute (PRRI) showed that a mere 41% of white evangelicals believe scripture provides no reason to refuse the COVID vaccine – that’s 59% of white evangelicals who think otherwise. The same polling organization found that 18% of all Americans believe the QAnon conspiracy theory that the “government, media and financial worlds in the U.S. are controlled by a group of Satan-worshipping pedophiles who run a global child sex-trafficking operation”. The most extreme example of end-times prophecy going off the rails was seen on January 6, when insurrectionists attempted a coup within Congress, driven by QAnon conspiracy theories. As one evangelical pastor put it, “Right now QAnon is still on the fringes of evangelicalism… but we have a pretty big fringe.”

The modern-day evangelical end-times posture can be walked back to a shift in theological interpretation of the mid-1800s. The interpretive biases that posit this theology have altered Christianity in many significant ways. Yet concepts of a sudden secret rapture, seven years of tribulation, and a thousand-year earthly kingdom all rest upon theological pillars of highly questionable origin. Such last days concepts have no support in historic Christianity, and could be divorced from Christianity altogether. Many evangelicals, having been raised in this mindset, will deny vaccines and literally die on the basis of the theological system under which they were taught, firmly believing that they are honoring God in doing so. Yet it is a flawed and unfalsifiable system of theology – not Christianity itself – that is to blame. Let us attempt to tease those two concepts apart.

On Christianity

On December 25, 2021 by Jonathan Zdziarski

“For no property of God which the mind can grasp is more characteristic of Him than existence, since existence, in the absolute sense, cannot be predicated of that which shall come to an end, or of that which has had a beginning, and He who now joins continuity of being with the possession of perfect felicity could not in the past, nor can in the future, be non-existent; for whatsoever is Divine can neither be originated nor destroyed. Wherefore, since God’s eternity is inseparable from Himself, it was worthy of Him to reveal this one thing, that He is, as the assurance of His absolute eternity.”

On the Trinity

St. Hilary of Poitiers

I’ve often been asked why an intellectual type guy such as myself would believe in God – a figure most Americans equate to a good bedtime story, or a religious symbol for people who need that sort of thing. After about 30 years of life as a Christian, my faith in God is the only thing that’s peeled me off the pavement through many hard times in my life, and helped keep me grounded during COVID. What God has to say about me – as a human – having intrinsic value, and deserving love (even in times when I didn’t love myself), is likely the only reason I hadn’t pulled the trigger a few times in my life. But it is far from a crutch; it has pushed me to conquer my own selfishness as a human, to learn to forgive, to suffer myself to be defrauded for the sake of my testimony, and to serve something greater than myself. Striving to understand God, especially through all of the American nonsense that is in the church today, has been a thought-provoking and captivating journey as well. I wasn’t raised in a Christian home, nor did I have any real preconceived notions about concepts such as church or the Bible. I didn’t really understand Christianity at all through my youth, other than from the perspective of an outsider – all I had figured was that God was a religious symbol for religious people.

Today’s perception of Christianity in America is that of a hate-filled group of racists that are too stupid to take a vaccine. A title that many so-called Christians have rightfully earned for themselves. This doesn’t represent Christianity any more than the other extremes do, though, and even atheists know this. There is a real standard we are called to meet as Christians, and much of this country has fallen short. It doesn’t mean that God isn’t who he said he is, and it doesn’t move the bar of accountability for those that profess to be Christian. There are countless people who are not of this stereotype, who strive to love and to do good, who won’t judge you, and who try their best to walk out a life worthy of the Christian faith.

I’ve been a Christian since 1993, and am convinced, based on my experiences and my understanding, that God is more than just a story. But it takes looking outside of the white American evangelical culture that’s often portrayed as Christianity to understand what God is about. I think most people already know in their heart who God is, and that’s why they’re so averse to the church. In recent times, there has been a cognitive dissonance between historical Christianity and the way the church behaves. Christians are equally mystified by this – but it does not invalidate everything that’s been written about God.

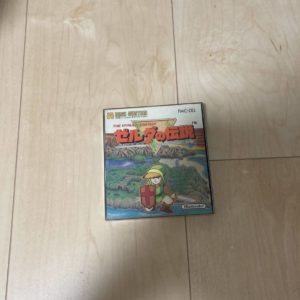

CSI Zelda: Examining Counterfeit Famicom Disk Games

On November 5, 2021 by Jonathan Zdziarski

I’ve previously written about auditing a graded video game, and some of the techniques that can be used to authenticate them. Now, I bring to you a wonderful opportunity to demonstrate what some counterfeit games look like, and how to spot one. It was a cold December day, when I came across an auction on Yahoo JP by seller hiroki888dorakue: a sealed Legend of Zelda (Zelda no Densetsu) Famicom game listed as “new” and “unopened”. Not only new, but this item has the coveted yellow “Disk System” text in the upper left corner, which only exists on early issue versions (v.0) of the game. For those who aren’t familiar with Famicom, Nintendo released the Famicom system in Japan prior to the US version known as the “NES”. The Japanese version of the “NES” was way cooler than what we had, and had many accessories that our American systems didn’t – 3D glasses (Rad Racer and Falsion look great), a keyboard with BASIC, a revolver (explaining the western theme of games that were strangely released in the US with a futuristic Zapper gun), and the beloved Famicom Disk System. Many popular titles were initially released on the Disk System before they landed in the United States in NES cartridge form factor. Legend of Zelda, released in the US in August 1987, was first released on disk in February 1986 in Japan. The Disk System had many neat features, including a PCM sound channel, giving this first version of Zelda a superior soundtrack. I own three additional copies of this game, two with the yellow text and one with the white text, a change Nintendo made in later production runs.

The Famicom Disk System made it relatively cheap to get a new game. Nintendo set up Famicom Disk Writer kiosks across Japan, where kids could put down a few Yen and get a brand new game written on their old disks. They would also be given a fresh set of labels for the game. This service, which was very awesome if you were a kid, became very popular in Japan until Nintendo discontinued it due to heavy piracy. Unfortunately, the ability to easily copy and relabel disks is also one of the many reasons counterfeiting Famicom Disk System games is so easy.

Today, there are numerous collectible counterfeits of popular (and expensive) titles on the market. A typical counterfeit looks like a brand new, sealed copy of a title but may actually have a fake seal, reproduction inserts, and possibly even a disk that used to be something mundane, like Golf, relabeled with fresh Disk Writer or reproduction labels. In this post, I’ll take a look at a few such counterfeits and point out some of the ways to detect them in your own collection.

The seller of this Zelda title had 70 positive reviews and only one negative review, which would lead some to believe he’s trustworthy. Most Japanese proxy bidding sites, however, often require hundreds of positive feedbacks before they’ll even allow you to buy from a merchant. There are other problems on the American auction sites. For example, user geisha-export has sold me a few counterfeits in the recent past, but when eBay issues a refund, the seller can have their negative feedback removed. As a result, no one knows that some of these sellers are cashing in on fakes.

Auditing a Graded Video Game

On November 4, 2021 by Jonathan Zdziarski

Anyone who’s read my blog knows that I am not a fan of video game grading. Grading companies, in my experience, do marginal quality work, and at a superficial level that cannot be audited once an item has been sealed. The holy plastic WATA box is all too often used to convince sellers that their item somehow has more value than it actually does, and it leaves buyers with the frustration of passing over finds because of greedy sellers who drank the kool-aid. Overall, video game grading has done more harm to the hobby than good.

I was lucky enough to find one seller who must have been frustrated that their VGA graded game hadn’t sold for the inflated prices they were led to believe they could get for it, and so I made a reasonable offer on it based on what an ungraded sealed copy would cost me. They accepted. I decided to use this as an experiment to crack open the enclosure and audit VGA’s work, and thought I’d share my findings so that the community would know what to expect a graded game actually looks like behind the plastic.

Authenticating Early Nintendo Systems and Games

On September 9, 2021 by Jonathan Zdziarski

“How can you have money,” demanded Ford, “if none of you actually produces anything? It doesn’t grow on trees you know.” “If you would allow me to continue.. .” Ford nodded dejectedly. “Thank you. Since we decided a few weeks ago to adopt the leaf as legal tender, we have, of course, all become immensely rich.” Ford stared in disbelief at the crowd who were murmuring appreciatively at this and greedily fingering the wads of leaves with which their track suits were stuffed. “But we have also,” continued the management consultant, “run into a small inflation problem on account of the high level of leaf availability, which means that, I gather, the current going rate has something like three deciduous forests buying one ship’s peanut.” Murmurs of alarm came from the crowd. The management consultant waved them down. “So in order to obviate this problem,” he continued, “and effectively revalue the leaf, we are about to embark on a massive defoliation campaign, and. . .er, burn down all the forests. I think you’ll all agree that’s a sensible move under the circumstances.” The crowd seemed a little uncertain about this for a second or two until someone pointed out how much this would increase the value of the leaves in their pockets whereupon they let out whoops of delight and gave the management consultant a standing ovation. The accountants among them looked forward to a profitable autumn aloft and it got an appreciative round from the crowd.”

Douglas Adams, The Restaurant at the End of the Universe

Ask any frustrated retro-gamer, and they’ll tell you the past couple of years have seen a fake market bubble to jack up game prices. What appear to be credible allegations of fraud and collusion have surfaced between grading companies and auction houses, such as WATA Games and Heritage Auctions, which hopefully will mean fair prices will start to return to a hobby that was previously only frequented by hardcore nerds, rather than investors. But along with this fake gaming bubble came another new phenomenon: fake, high dollar “premium” Nintendo collections. One particular peeve of mine is the introduction of fake “test market” NES sets appearing on auction sites. A “test market” system is a reference to the first hundred thousand units sold as part of a limited release in 1985, before Nintendo knew whether the consoles would be viable. Nobody wanted to carry video games after Atari crashed the market in 1983, and so Nintendo USA, without telling their Japanese parent company, promised retail stores a refund for any unsold systems and a 90 day line of credit. They ended up selling nearly 62 million consoles. Those first 100,000 trial market systems are now considered by collectors to be the Holy Grail.

They’re also fraught with fraud, due to the prices they can fetch, especially if you find one graded. Many fraudulent test market systems include a few genuine components from the original box, but were either missing parts or pieced together. Because they came with the full caboodle – the Zapper, R.O.B., controllers, and two games – a lot of pieces can get lost or broken over time. The replacement parts included at auction often include retail parts from after Nintendo’s worldwide release, severely diminishing their value. Any test market system today could easily include post-release cartridges, light guns, robots, controllers, manuals, boxes, or even circuit boards; buyers and sellers generally believe there’s no way to tell the difference. All too often, someone will buy an empty test market box and throw something together with junk from eBay, selling a $200 system for thousands. In some extreme cases, even the original NES main board would be swapped out for a release board, leaving the only authentic parts the plastic shell! Such fraud can happen with individual games too. These shenanigans ruin the legitimacy and the value of the asset. Fakes have always existed, but with the inflated prices sellers think they can get these days, hobbyists and collectors stand to lose a lot more money than ever thought. Up until recently, test market systems have been considered “a real treat” when found in great condition, but thanks to a manufactured gaming bubble, they’re now fetching big money – and with that comes a lot of people looking to rip you off.

The Only Winning Move is Not to Play

On July 8, 2021 by Jonathan Zdziarski

Little fanfare has been given to the story of a glitch in an experimental AI game from 2019, but the results seem rather poignant to me. To summarize, the AI decided that committing suicide at the beginning of the game was the best strategy because the game was too hard, and it meant fewer points

Biden Should Take the White House off of Twitter

On December 23, 2020 by Jonathan Zdziarski

The Biden administration is having a little Twitter fight about whether or not to reset the followers of the @potus account. While followers were rolled over from the Obama administration to Trump’s, the Trump administration, which views Twitter followers as if they represented actual voters-who-love-Donald, doesn’t think the incoming president should get to inherit all of those bots and disenfranchised twelve-year-olds. Let us stop and reflect on the stupidity and pettiness of this argument. What the Biden administration really should be thinking about is whether to close @potus and get the White House off of Twitter completely.

Social media, especially Twitter, has year after year been on a steady course of devolving into one of the most toxic and unpleasant public gatherings on the Internet. Long before Trump took office, social media was the leading source of disinformation, threats, harassment, toxicity, and division. Combined with a platform that adopts thought-terminating, loaded-language hashtags (e.g. #StopTheSteal) and abbreviated messaging that lacks critical thought, Twitter has long been a platform designed to capitalize on the cult phenomenon. Twitter has been not only markedly complicit, but in a position to profit off of the toxicity, disinformation, and abuse it allows from the Trump administration and other public officials who’ve started emulating the behavior.

PSA: Someone is Impersonating Me Online

On December 9, 2020 by Jonathan Zdziarski

Over the past few months, a small group of individuals have been impersonating me online using fake email addresses, shell accounts, and other mediums. These individuals are skilled at social engineering, and are also criminally dangerous. So far, the purpose seems to be attempts to gain access to confidential information, and to create proxied (MiTM’d)

Truth is not Partisan

On November 18, 2020 by Jonathan Zdziarski

If you watched yesterday’s senate judiciary hearings with CEOs from Twitter and Facebook, two things would have stuck out to you. First, why is Jack Dorsey addressing the senate from the kitchen department at an IKEA? Second, how did a judiciary hearing about misinformation campaigns somehow turn into a misinformation campaign itself? At the heart