On the Psychological Demands of Working From Home

On March 22, 2020 by Jonathan ZdziarskiAs the angst and stir-craziness start to set in from the world suddenly being forced into lockdown, I’ve seen a lot of articles about working from home, by people in all walks of life, from programmers to astronauts. Most of them offer practical beginner advice, like go outside, plan a schedule, etc. etc. That’s all good advice to take in, but after a few weeks, you’re probably realizing there’s a lot more to making this work well. As the reality of our predicament is starting to sink in, it’s important to start thinking about the psychological demands of working from home. I’ve spent the better part of my 25 year career working from home, and when I started thinking about what, if any, wisdom I could share on how to make it work well, found that I’d come up with a lot of the same things I’d already shared in a post two years ago, Living With Depression in Tech. Working at home has some fantastic benefits, but also challenges that go far beyond basic discipline development. Being productive and successful at home comes down to changing your perspective – focusing on the impacts you’re having, believing in what you’re doing, and finding ways to grow and thrive on your own so that you can maintain your drive over the long haul.

Presidential Policy Directive 19

On September 26, 2019 by Jonathan ZdziarskiIs anyone surprised the Obama-era whistleblower directive put into place actually worked? I bet Edward Snowden is. Not only did it work, but Congress wouldn’t have given it such weight had the information been otherwise leaked in a Snowden or Manning-esque style, nor would the IG have had the chance to acknowledge the information as

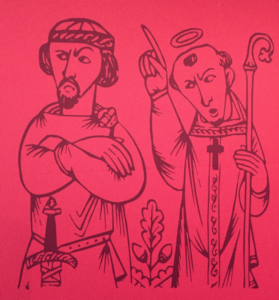

Christianity and the Cult Phenomenon

On August 1, 2019 by Jonathan ZdziarskiJoshua Harris, the author of “I Kissed Dating Goodbye”, recently renounced his faith and apologized for his awful book. I remember when it came out in the late 90’s, and still see the lasting damage it inflicted on two generations of young men and women. Harris ended up creating a toxic culture inside the mainstream church that would take two generations of Christian men back into the dark ages of devaluing women based on their level of sexual indiscretion, and helped fan the flames of homophobia and exclusion. His “sexual prosperity gospel”, as it’s been called, led to a life of guilt and shame for many, and created lasting scars that caused some to abandon their faith or their marriages later on in life.

Christianity teaches that a person’s worth has nothing to do with their sexual history (or orientation), but from Jesus, who was willing to die to reconcile humanity to God. We’re not defined by our sins, and we’re not defined by our past; we are defined by Christ. This is a far cry from the cultish fundamentalist legalism that Harris’s church taught for decades; the purity movement amounted to nothing more than a way for Christians to measure themselves and others up. It’s no surprise that Harris renounced his faith; if the faith he was practicing was grounded in such a flawed understanding of grace and intrinsic human worth, then by any measurement it was not Christianity. The truly sad part is that he convinced millions of Christians to adopt this same world view for more than 20 years, allowing it to hurt a lot of people before it became popular for leaders to finally speak out against it. Sorry, Josh, but an apology doesn’t let you off the hook.

But this failure wasn’t just of Harris’s own making: It was the complete failure of church leaders everywhere in elevating Harris’s status to a Christian leader. Harris was a mere 21 years old, and hadn’t even been to seminary yet when he wrote the book. Rather than rightfully dismissing his book as yet more of the trash writing of that era, the inexperienced youth leaders of that time (many of whom also lacked formal training) saw a way to get kids to act responsibly, without considering the consequences of his legalism. From piecing together accounts online, Harris’s own church reeked of a world of deep-seated problems, including sexual abuse coverup, abuses of power, control and manipulation of their congregation, and legalism running rampant. The church had become so damaging, much of his congregation ended up leaving, and there’s an entire blog dedicated to victims trying to recover from Harris and the rest of his church’s leaders. Indeed, it’s very telling to see the kind of culture his book came out of, and the horrifying fruits of it. When you read that Josh Harris has departed Christianity, this appears by all accounts to be a very good thing for Christianity.

Living with Depression in Tech

On August 22, 2018 by Jonathan ZdziarskiI’ve been trying to avoid writing about depression for a while now. Almost nobody in tech wants to talk about things like this. A stigma still very much exists around mental illness, and in tech with all its flaming, trolling, and fragile manhood egos, people have learned to be thick-skinned. It’s taken me years to realize that I never stopped struggling with depression throughout my dysfunctional childhood, and I’ve carried it through my teens and adult life with me. I was diagnosed and medicated as a teen, but didn’t fully understand that it still haunted me, playing the same old record grooves in my brain in adulthood. As my thyroid disease began accelerating, I needed to work even harder to maintain balance or the world would come crashing in. Struggling through my career and relationships, things became easier after I understood what was going on inside of me. I feel a certain responsibility to bring to light what is likely a widespread issue in the tech community.

Depression can manifest itself in various forms for different people, and my story isn’t “everyone’s” story. I can only write from my own personal experiences. Most of this has had lifelong personal struggles unrelated to work, and while one can probably deduce this, the focus of this post is handling professional challenges. You might identify with some of these issues, and that’s great if this post helps, but it also shouldn’t be used for self-diagnosis. Depression has been far worse than the details I’m willing to share publicly, and if you think you may be depressed, you should seek professional counseling.

I have no background in psychology; I’m just sharing what works for me. I have no background in medicine either, and having been on and off medication, I can’t recommend one way or the other. I do know that all medication has its limits, so learning how to cope is an important part to having a complete life plan. At the end of the day, I can’t solve your depression (or mine), but I can share how I’ve coped with it, and won some victories. This is a survival story that hopefully might have some meaningful advice for others.

How Social Media Changed Us

On March 23, 2018 by Jonathan ZdziarskiThe current young generation will soon have grown up without ever knowing what it’s like to not have social media. They’re also growing up without a sense of how society was before social media came into play. Whether you use social media or not, it’s likely affected your life because it’s changed how people relate to one another – including you. While there are many good aspects of social media and the concept of bringing people together, there are also many negative changes it’s had on how we relate to one another.

I’ve spent a lot of time observing others and how social media has affected them online over time, and seen the problems it can create. For me personally, I’ve never been happier to be off of social media than the past year or so when I finally ditched Twitter for good. Twitter is a creepy and toxic place, which seems to be exactly what their CEO wants it to be. I found that I didn’t like the person I had to become in order to stay on it. Most social media is a dumpster fire, but Twitter was a particularly awful experience. It simply isn’t worth the stress and distraction in order to relate to a bunch of randos on the Internet whose only goal in life is to cause misery. Social media doesn’t deserve to have the power to change you, but they do. Getting back to the humanity of relationships is almost like waking up from a bad dream: you’d almost forgotten the goodness in what normal relationships with others (professional, friendships, etc.) feels like.

So at the risk of the next generation never knowing what it’s like to have a normal relationship with others, I’ve written down just a few of the things that are important in building friendships and other types of relationships – things social media seems to have endangered… at least, from the perspective of this old Gen-X’er. Writing all of this makes me really miss how people were before social media existed.

Joining Apple

On March 14, 2017 by Jonathan ZdziarskiI’m pleased to announce that I’ve accepted a position with Apple’s Security Engineering and Architecture team, and am very excited to be working with a group of like minded individuals so passionate about protecting the security and privacy of others. This decision marks the conclusion of what I feel has been a matter of conscience

Attacking the Phishing Epidemic

On February 16, 2017 by Jonathan ZdziarskiAs long as people can be tricked, there will always be phishing (or social engineering) on some level or another, but there’s a lot more that we can do with technology to reduce the effectiveness of phishing, and the number of people falling victim to common theft. Making phishing less effective ultimately increases the cost to the criminal, and reduces the total payoff. Few will argue that our existing authentication technologies are stuck in a time warp, with some websites still using standards that date back to the 1990s. Browser design hasn’t changed very much since the Netscape days either, so it’s no wonder many people are so easily fooled by website counterfeits.

You may have heard of a term called the line of death. This is used to describe the separation between the trusted components of a web browser (such as the address bar and toolbars) and the untrusted components of a browser, namely the browser window. Phishing is easy because this is a farce. We allow untrusted elements in the trusted windows (such as a favicon, which can display a fake lock icon), tolerate financial institutions that teach users to accept any variation of their domain, and use a tiny monochrome font that can make URLs easily mistakable, even if users were paying attention to them. Worse even, it’s the untrusted space that we’re telling users to conduct the trusted operations of authentication and credit card transactions – the untrusted website portion of the web browser!.

Our browsers are so awful today that the very best advice we can offer everyday people is to try and memorize all the domains their bank uses, and get a pair of glasses to look at the address bar. We’re teaching users to perform trusted transactions in a piece of software that has no clear demarcation of trust.

The authentication systems we use these days were designed to be able to conduct secure transactions with anyone online, not knowing who they are, but most users today know exactly who they’re doing business with; they do business with the same organizations over and over; yet to the average user, a URL or an SSL certificate with a slightly different name or fingerprint means nothing. The average user relies on the one thing we have no control over: What the content looks like.

I propose we flip this on its head.

Protecting Your Data at a Border Crossing

On February 9, 2017 by Jonathan ZdziarskiWith the current US administration pondering the possibility of forcing foreign travelers to give up their social media passwords at the border, a lot of recent and justifiable concern has been raised about data privacy. The first mistake you could make is presuming that such a policy won’t affect US citizens. For decades, JTTFs (Joint Terrorism Task Forces) have engaged in intelligence sharing around the world, allowing foreign governments to spy on you on behalf of your home country, passing that information along through various databases. What few protections citizens have in their home countries end at the border, and when an ally spies on you, that data is usually fair game to share back to your home country. Think of it as a backdoor built into your constitutional rights. To underscore the significance of this, consider that the president signed an executive order just today stepping up efforts at fighting international crime, which will likely result in the strengthening of resources to a JTTFs to expand this practice of “spying on my brother’s brother for him”. With this, the president also counted the most common crimes – drugs, gangs, racketeering, etc – as matters of “national security”.

Once policies that require surrendering passwords (I’ll call them password policies from now on) are adopted, the obvious intelligence benefit will no doubt inspire other countries to establish reciprocity in order to leverage receiving better intelligence about their own citizens traveling abroad. It’s likely the US will inspire many countries, including oppressive nations, to institute the same password policies at the border. This will ultimately be used to skirt search and seizure laws by opening up your data to forensic collection. In other words, you don’t need Microsoft to service a warrant, nor will the soil your data sits on matter, because it will be a border agent connecting directly your account with special software throug the front door.

I am not a lawyer, and I can’t provide you with legal advice about your rights, or what you can do at a border crossing to protect yourself legally, but I can explain the technical implications of this, as well as provide some steps you can take to protect your data regardless of what country you’re entering. Disclaimer: You accept full responsibility and liability for taking any of this information and using it.

Slides: Crafting macOS Root Kits

On February 2, 2017 by Jonathan ZdziarskiHere are the slides from my talk at Dartmouth College this week; this was a basic introduction / overview of the macOS kernel and how root kits often have fun with the kernel. There’s not much new here, but the deck might be a good introduction for anyone looking to get into develop security tools

Resolving Kernel Symbols Post-ASLR

On February 2, 2017 by Jonathan ZdziarskiThere are some 21,000 symbols in the macOS kernel, but all but around 3,500 are opaque even to kernel developers. The reasoning behind this was likely twofold: first, Apple is continually making changes and improvements in the kernel, and they probably don’t want kernel developers mucking around with unstable portions of the code. Secondly, kernel dev used to be the wild wild west, especially before you needed a special code signing cert to load a kext, and there were a lot of bad devs who wrote awful code making macOS completely unstable. Customers running such software probably blamed Apple for it, instead of the developer. Apple now has tighter control over who can write kernel code, but it doesn’t mean developers have gotten any better at it. Looking at some commercial products out there, there’s unsurprisingly still terrible code to do things in the kernel that should never be done.

So most of the kernel is opaque to kernel developers for good reason, and this has reduced the amount of rope they have to hang themselves with. For some doing really advanced work though (especially in security), the kernel can sometimes feel like a Fisher Price steering wheel because of this, and so many have found ways around privatized functions by resolving these symbols and using them anyway. After all, if you’re going to combat root kits, you have to act like a root kit in many ways, and if you’re going to combat ransomware, you have to dig your claws into many of the routines that ransomware would use – some of which are privatized.

Today, there are many awful implementations of both malware and anti-malware code out there that resolve these private kernel symbols. Many of them do idiotic things like open and read the kernel from a file, scan memory looking for magic headers, and other very non-portable techniques that risk destabilizing macOS even more. So I thought I’d take a look at one of the good examples that particularly stood out to me. Some years back, Nemo and Snare wrote some good in-memory symbol resolving code that walked the LC_SYMTAB without having to read the kernel from disk, scan memory, or do any other disgusting things, and did it in a portable way that worked on whatever new versions of macOS came out.

Technical Analysis: Meitu is Junkware, but not Malicious

On January 26, 2017 by Jonathan ZdziarskiLast week, I live tweeted some reverse engineering of the Meitu iOS app, after it got a lot of attention on Android for some awful things, like scraping the IMEI of the phone. To summarize my own findings, the iOS version of Meitu is, in my opinion, one of thousands of types of crapware that you’ll find on any mobile platform, but does not appear to be malicious. In this context, I looked for exfiltration or destruction of personal data to be a key indicator of malicious behavior, as well as performing any kind of unauthorized code execution on the device or performing nefarious tasks… but Meitu does not appear to go beyond basic advertiser tracking. The application comes with several ad trackers and data mining packages compiled into it – which appear to be primarily responsible for the app’s suspicious behavior. While it’s unusually overloaded with tracking software, it also doesn’t seem to be performing any kind of exfiltration of personal data, with some possible exceptions to location tracking. One of the reasons the iOS app is likely less disgusting than the Android app is because it can’t get away with most of that kind of behavior on the iOS platform.

Configuring the Touch Bar for System Lockdown

On January 17, 2017 by Jonathan ZdziarskiThe new Touch Bar is often marketed as a gimmick, but one powerful capability it has is to function as a lockdown mechanism for your machine in the event of a physical breach. By changing a few power management settings and customizing the Touch Bar, you can add a button that will instantly lock the machine’s screen and then begin a countdown (that’s configurable, e.g. 5 minutes) to lock down the entire system, which will disable the fingerprint reader, remove power to the RAM, and discard your FileVault keys, effectively locking the encryption, protecting you from cold boot attacks, and prevent the system from being unlocked by a fingerprint.

One of the reasons you may want to do this is to allow the system to remain live while you step away, answer the door, or run to the bathroom, but in the event that you don’t come back within a few minutes, lock things down. It can be ideal for the office, hotels, or anywhere you feel that you feel your system may become physically compromised. This technique offers the convenience of being able to unlock the system with your fingerprint if you come back quickly, but the safety of having the system secure itself if you don’t.

Backdoor: A Technical Definition

On January 13, 2017 by Jonathan ZdziarskiOriginal Date: April, 2016

A clear technical definition of the term backdoor has never reached wide consensus in the computing community. In this paper, I present a three-prong test to determine if a mechanism is a backdoor: “intent”, “consent”, and “access”; all three tests must be satisfied in order for a mechanism to meet the definition of a backdoor. This three-prong test may be applied to software, firmware, and even hardware mechanisms in any computing environment that establish a security boundary, either explicitly or implicitly. These tests, as I will explain, take more complex issues such as disclosure and authorization into account.

The technical definition I present is rigid enough to identify the taxonomy that backdoors share in common, but is also flexible enough to allow for valid arguments and discussion.

On NCCIC/FBI Joint Report JAR-16-20296

On January 6, 2017 by Jonathan ZdziarskiSocial media is ripe with analysis of an FBI joint report on Russian malicious cyber activity, and whether or not it provides sufficient evidence to tie Russia to election hacking. What most people are missing is that the JAR was not intended as a presentation of evidence, but rather a statement about the Russian compromises, followed by a detailed scavenger hunt for administrators to identify the possibility of a compromise on their systems. The data included indicators of compromise, not the evidentiary artifacts that tie Russia to the DNC hack.

One thing that’s been made clear by recent statements by James Clapper and Admiral Rogers is that they don’t know how deep inside American computing infrastructure Russia has been able to get a foothold. Rogers cited his biggest fear as the possibility of Russian interference by injection of false data into existing computer systems. Imagine the financial systems that drive the stock market, criminal databases, driver’s license databases, and other infrastructure being subject to malicious records injection (or deletion) by a nation state. The FBI is clearly scared that Russia has penetrated more systems than we know about, and has put out pages of information to help admins go on the equivalent of a bug bounty.

San Bernardino: Behind the Scenes

On November 2, 2016 by Jonathan ZdziarskiI wasn’t originally going to dig into some of the ugly details about San Bernardino, but with FBI Director Comey’s latest actions to publicly embarrass Hillary Clinton (who I don’t support), or to possibly tip the election towards Donald Trump (who I also don’t support), I am getting to learn more about James Comey and from what I’ve learned, a pattern of pushing a private agenda seems to be emerging. This is relevant because the San Bernardino iPhone matter saw numerous accusations of pushing a private agenda by Comey as well; that it was a power grab for the bureau and an attempt to get a court precedent to force private business to backdoor encryption, while lying to the public and possibly misleading the courts under the guise of terrorism.

On the State of Open Source

On October 3, 2016 by Jonathan Zdziarski I was just a teenager when I got involved in the open source community. I remember talking with an old bearded guy once about how this new organization, GNU, is going to change everything. Over the years, I mucked around with a number of different OSS tools and operating systems, got excited when symmetric multiprocessing came to BSD, screwed around with Linux boot and root disks, and had become both engaged and enthralled with the new community that had developed around Unix over the years. That same spirit was simultaneously shared outside of the Unix world, too. Apple user groups met frequently to share new programs we were working on with our ][c’s, and later our ][gs’s and Macs, exchange new shareware (which we actually paid for, because the authors deserved it), and to buy stacks of floppies of the latest fonts or system disks. We often demoed our new inventions, shared and exchanged the source code to our BBS systems, games, or anything else we were working on, and made the agendas of our user groups community efforts to teach and understand the awful protocols, APIs, and compilers we had at the time. This was my first experience with open source. Maybe it was not yours, although I hope yours was just as positive.

I was just a teenager when I got involved in the open source community. I remember talking with an old bearded guy once about how this new organization, GNU, is going to change everything. Over the years, I mucked around with a number of different OSS tools and operating systems, got excited when symmetric multiprocessing came to BSD, screwed around with Linux boot and root disks, and had become both engaged and enthralled with the new community that had developed around Unix over the years. That same spirit was simultaneously shared outside of the Unix world, too. Apple user groups met frequently to share new programs we were working on with our ][c’s, and later our ][gs’s and Macs, exchange new shareware (which we actually paid for, because the authors deserved it), and to buy stacks of floppies of the latest fonts or system disks. We often demoed our new inventions, shared and exchanged the source code to our BBS systems, games, or anything else we were working on, and made the agendas of our user groups community efforts to teach and understand the awful protocols, APIs, and compilers we had at the time. This was my first experience with open source. Maybe it was not yours, although I hope yours was just as positive.

It wasn’t open source that people were excited about, and we didn’t really even call it open source at first. It was computer science in general. Computer science was a brand new world of discovery for many of us, and open source was merely the bi-product of natural curiosity and the desire to share knowledge and collaborate. You could call it hacking, but at the time we didn’t know what the hell we were doing, or what to call it. The environment, at the time, was positive, open, and supportive; words that, unfortunately, you probably wouldn’t associate with open source today. You could split hairs and call this the “computing” or “hacking” community, but at the time all of these things were intertwined, and you couldn’t tease them apart without destroying them all: perhaps that’s what went wrong, eventually we did.

WhatsApp Forensic Artifacts: Chats Aren’t Being Deleted

On July 28, 2016 by Jonathan ZdziarskiSorry, folks, while experts are saying the encryption checks out in WhatsApp, it looks like the latest version of the app tested leaves forensic trace of all of your chats, even after you’ve deleted, cleared, or archived them… even if you “Clear All Chats”. In fact, the only way to get rid of them appears to be to delete the app entirely.

To test, I installed the app and started a few different threads. I then archived some, cleared, some, and deleted some threads. I made a second backup after running the “Clear All Chats” function in WhatsApp. None of these deletion or archival options made any difference in how deleted records were preserved. In all cases, the deleted SQLite records remained intact in the database.

Just to be clear, WhatsApp is deleting the record (they don’t appear to be trying to intentionally preserve data), however the record itself is not being purged or erased from the database, leaving a forensic artifact that can be recovered and reconstructed back into its original form.

WSJ Describes Reckless Behavior by FBI in Terrorism Case

On April 26, 2016 by Jonathan ZdziarskiThe Wall Street Journal published an article today citing a source at the FBI is planning to tell the White House that “it knows so little about the hacking tool that was used to open terrorist’s iPhone that it doesn’t make sense to launch an internal government review”. If true, this should be taken as an act of recklessness by the FBI with regards to the Syed Farook case: The FBI apparently allowed an undocumented tool to run on a piece of high profile, terrorism-related evidence without having adequate knowledge of the specific function or the forensic soundness of the tool.

Best practices in forensic science would dictate that any type of forensics instrument needs to be tested and validated. It must be accepted as forensically sound before it can be put to live evidence. Such a tool must yield predictable, repeatable results and an examiner must be able to explain its process in a court of law. Our court system expects this, and allows for tools (and examiners) to face numerous challenges based on the credibility of the tool, which can only be determined by a rigorous analysis. The FBI’s admission that they have such little knowledge about how the tool works is an admission of failure to evaluate the science behind the tool; it’s core functionality to have been evaluated in any meaningful way. Knowing how the tool managed to get into the device should be the bare minimum I would expect anyone to know before shelling out over a million dollars for a solution, especially one that was going to be used on high-profile evidence.

A tool should not make changes to a device, and any changes should be documented and repeatable. There are several other variables to consider in such an effort, especially when imaging an iOS device. Apart from changes made directly by the tool (such as overwriting unallocated space, or portions of the file system journal), simply unlocking the device can cause the operating system to make a number of changes, start background tasks which could lead to destruction of data, or cause other changes unintentionally. Without knowing how the tool works, or what portions of the operating system it affects, what vulnerabilities are exploited, what the payload looks like, where the payload is written, what parts of the operating system are disabled by the tool, or a host of other important things – there is no way to effectively measure whether or not the tool is forensically sound. Simply running it against a dozen other devices to “see if it works” is not sufficient to evaluate a forensics tool – especially one that originated from a grey hat hacking group, potentially with very little actual in-house forensics expertise.

Hardware-Entangled APIs and Sessions in iOS

On April 26, 2016 by Jonathan ZdziarskiApple has long enjoyed a security architecture whose security, in part, rests on the entanglement of their encryption to a device’s physical hardware. This pairing has demonstrated to be highly effective at thwarting a number of different types of attacks, allowing for mobile payments processing, secure encryption, and a host of other secure services running on an iPhone. One security feature that iOS lacks for third party developers is the ability to validate the hardware a user is on, preventing third party applications from taking advantage of such a great mechanism. APIs can be easily spoofed, as a result, and sessions and services are often susceptible to a number of different forms of abuse. Hardware validation can be particularly important when dealing with crowd-sourced data and APIs, as was the case a couple years ago when a group of students hacked Waze’s traffic intelligence. These types of Sybil attacks allow for thousands of phantom users to be created off of one single instance of an application, or even spoof an API altogether without a connection to the hardware. Other types of MiTM attacks are also a threat to applications running under iOS, for example by stealing session keys or OAuth tokens to access a user’s account from a different device or API. What can Apple do to thwart these types of attacks? Hardware entanglement through the Secure Enclave.

Open Letter to Congress on Encryption Backdoors

On April 20, 2016 by Jonathan ZdziarskiTo the Honorable Congress of the United States of America,

I am a proud American who has had the pleasure of working with the law enforcement community for the past eight years. As an independent researcher, I have assisted on numerous local, state, and federal cases and trained many of our federal and military agencies in digital forensics (including breaking numerous encryption implementations). Early on, there was a time when my skill set was exclusively unique, and I provided assistance at no charge to many agencies flying agents out to my small town for help, or meeting with detectives while on vacation. I have developed an enormous respect for the people keeping our country safe, and continue to help anyone who asks in any way that I can.

With that said, I have seen a dramatic shift in the core competency of law enforcement over the past several years. While there are many incredibly bright detectives and agents working to protect us, I have also seen an uncomfortable number who have regressed to a state of “push button forensics”, often referred to in law enforcement circles as “push and drool forensics”; that is, rather than using the skills they were trained with to investigate and solve cases, many have developed an unhealthy dependence on forensics tools, which have the ability to produce the “smoking gun” for them, literally with the touch of a button. As a result, I have seen many open-and-shut cases that have had only the most abbreviated of investigations, where much of the evidence was largely ignored for the sake of these “smoking guns” – including much of the evidence on the mobile device, which often times conflicted with the core evidence used.