Apple’s Authentication Scheme and “Backdoors” Discussion

On July 31, 2014 by Jonathan Zdziarski

I’ve heard a number of people make an argument about Apple’s authentication front-ending the services I’ve described in my paper, including the “file relay” service, which has opened up a discussion about the technical definition of a backdoor. The primary concern I’m hearing, including from Apple, is that the user has to authenticate before having access to this service, which one would normally expect would preclude a service from being a backdoor by some (but not all) definitions. This is a valid point, and in fact I acknowledge this thoroughly in my paper. Let me explain, however, why this argument about authentication is more complicated and subtle than it seems.

Most authentication schemes are encapsulated from weakest to strongest, and are also isolated from one another; certain credentials get you into certain systems, but not into others. You may have a separate password for Twitter, Facebook, or other accounts, and they only interoperate if you’re using a single sign-on mechanism (for example, OAuth) to use that same set of credentials on other sites. If one gets stolen, then, only the services that are associated with those credentials can be accessed.

Those authentication mechanisms are often protected with even stronger authentication systems. For example, your password might be stored on Apple’s keychain, which is protected with encryption that is tied directly to your desktop password. Your entire disk might also be encrypted using full disk encryption, which protects the keychain (and all of your other data) with yet another (usually stronger) password. So you end up with a hierarchy of authentication mechanisms that get protected by stronger authentication mechanisms, and sometimes even stronger ones on top of that.

Apple’s authentication scheme for iOS, however, is the opposite of this: the strongest forms of authentication are protected by the weakest, creating a significant security problem in their design. The way Apple has designed the iOS authentication scheme is that the weakest forms of authentication have complete control to bypass the stronger forms of authentication. This allows services like file relay, which bypasses backup encryption, to be accessed with the weakest authentication mechanisms (PIN or pair record), when end-users are relying on the stronger “backup encryption password” to protect them.
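To make the contrast concrete, here is a minimal sketch of the two layering models. It is a toy model, not Apple's implementation: the `Strength` ranking, the two function names, and the two-level split are all assumptions made for illustration. The only detail taken from the text is that a PIN or pair record counts as weak and the backup encryption password counts as strong.

```python
from enum import IntEnum


class Strength(IntEnum):
    """Hypothetical two-level ranking of credentials, for illustration only."""
    WEAK = 1    # e.g. a PIN or pair record
    STRONG = 2  # e.g. the backup encryption password


def layered_allows(presented: Strength, required: Strength) -> bool:
    """Conventional layering: a credential unlocks only data guarded at or below its own strength."""
    return presented >= required


def inverted_allows(presented: Strength, required: Strength) -> bool:
    """Inverted layering: any valid credential is accepted, so `required` is never consulted."""
    return presented >= Strength.WEAK


if __name__ == "__main__":
    # Data the user believes is guarded by the strong credential.
    required = Strength.STRONG
    print(layered_allows(Strength.WEAK, required))
    print(inverted_allows(Strength.WEAK, required))
```

In the conventional model the weak credential is refused for strongly protected data; in the inverted model the same request succeeds, which is the situation described above for a service that bypasses backup encryption.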

Oxygen Forensics: Latest Forensics Tool to Exploit Apple’s “Diagnostic Service” to Bypass Encryption

On July 31, 2014 by Jonathan Zdziarski

While Apple may claim that a key subject of my talk, “Identifying Backdoors, Attack Points, and Surveillance Mechanisms in iOS Devices” (com.apple.mobile.file_relay), is for diagnostics, a recent announcement from the makers of the fantastic Oxygen Forensics suite shows strong evidence that law enforcement forensics is continuing to take every legal technical option available to

Roundup of iOS Backdoor (AKA “Diagnostic Service”) Related Tech Articles

On July 28, 2014 by Jonathan Zdziarski

There are a lot of terrible news articles out there, and a lot of terrible “journalists” who have either over-hyped my research, or dismissed it entirely. After ZDNet’s utterly horrible diatribe about my research, I posted a proof-of-concept to help further clarify what was and wasn’t possible. Unfortunately, the FUD has continued, and so I

Dispelling Confusion and Myths: iOS Proof-of-Concept

On July 25, 2014 by Jonathan Zdziarski

Here’s my iOS Backdoor Proof-of-Concept:

http://youtu.be/z5ymf0UsEuw

When I originally gave my talk, it was to a small room of hackers at a hacker conference with a strong privacy theme. With two hours of content to fit into 45 minutes, I not only had no time to demo a POC, but felt that demonstrating a POC of the personal data you could extract from a locked iOS device might be construed as attempting to embarrass Apple or to be sensationalist. After the talk, I did ask a number of people that I know attended if they felt I was making any accusations or outrageous statements, and they told me no, that I presented the information and left it to the audience to draw conclusions. They also mentioned that I was very careful with my wording, so as not to attempt to alarm people.

The paper itself was published in a reputable forensics journal, and was peer-reviewed, edited, and accepted as an academic paper. Both my paper and presentation made some very important security and privacy concerns known, and the last thing I wanted to do was to fuel the fire for conspiracy theorists who would interpret my talk as an accusation that Apple is working with the NSA. The fact is, I’ve never said Apple was conspiring secretly with any government agency; that’s what some journalists have concluded, and with no evidence, mind you. Apple might be, sure, but then again they also might not be.

What I do know is that there are a number of laws requiring compliance with customer data, and that Apple has a very clearly defined public law enforcement process for extracting much the same data off of passcode-locked iPhones as the mechanisms I’ve discussed do. In this context, what I deem backdoors (which Apple claims are for their own use), attack points, and so on become, yes, suspicious, but more importantly abuse-prone, and can and have been used by government agencies to acquire data from devices that they otherwise wouldn’t be able to access with forensics software. As this deals with our private data, this should all be very open to public scrutiny, but some of these mechanisms had never been disclosed by Apple until after my talk.

Apple Confirms “Backdoors”; Downplays Their Severity

On July 23, 2014 by Jonathan Zdziarski

Apple responded to allegations of hidden services running on iOS devices with this knowledge base article. In it, they described three of the big services that I outlined in my talk. So again, Apple has, in a traditional sense, admitted to having backdoors on the device specifically for their own use.

A backdoor simply means that it’s an undisclosed mechanism that bypasses some of the front end security to make access easier for whoever it was designed for (OWASP has a great presentation on backdoors, where they are defined like this). It’s an engineering term, not a Hollywood term. In the case of file relay (the biggest undisclosed service I’ve been barking about), backup encryption is being bypassed, as well as basic file system and sandbox permissions, and a separate interface is there to simply copy a number of different classes of files off the device upon request; something that iTunes (and end users) never even touch. In other words, this is completely separate from the normal interfaces on the device that end users talk to through iTunes or even Xcode. Some of the data Apple can get is data the user can’t even get off the device, such as the user’s photo album that’s synced from a desktop, screenshots of the user’s activity, geolocation data, and other privileged personal information that the device protects even from its own users. This weakens privacy by completely bypassing the end user backup encryption that consumers rely on to protect their data, and also gives the customer a false sense of security, believing their personal data is going to be encrypted if it ever comes off the device.

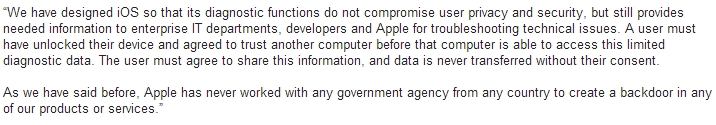

Apple Responds

On July 21, 2014 by Jonathan Zdziarski

In a response from Apple PR to journalists about my HOPE/X talk, it looks like Apple might have inadvertently admitted that, in the most widely accepted sense of the word, they do indeed have backdoors in iOS, but claim that the purpose is for “diagnostics” and “enterprise”.

The problem with this is that these services dish out data (and bypass backup encryption) regardless of whether “Send Diagnostic Data to Apple” is turned on, and regardless of whether the device is managed by an enterprise policy of any kind. If these services were intended for such purposes, you’d think they’d only work if the device was managed/supervised or if the user had enabled diagnostic mode. Unfortunately this isn’t the case. Every single device has these services enabled, there is no way to turn them off, and users are never prompted for consent to send this kind of personal data off the device.
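The gap between the stated purpose and the behavior described above can be written down as a small sketch. Everything here is hypothetical: `DeviceState`, its two fields, and both functions are names invented for illustration, and nothing below reflects how iOS is actually implemented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceState:
    """Hypothetical summary of the two settings discussed in the text."""
    diagnostics_opt_in: bool   # stands in for "Send Diagnostic Data to Apple"
    enterprise_managed: bool   # stands in for a managed/supervised device


def expected_gate(state: DeviceState) -> bool:
    """What a diagnostics- or enterprise-only service would be expected to check."""
    return state.diagnostics_opt_in or state.enterprise_managed


def described_behavior(state: DeviceState) -> bool:
    """Behavior as described in the post: the settings are never consulted."""
    return True


if __name__ == "__main__":
    # An ordinary consumer device with both settings off.
    consumer = DeviceState(diagnostics_opt_in=False, enterprise_managed=False)
    print(expected_gate(consumer))
    print(described_behavior(consumer))
```

For an unmanaged device with diagnostics turned off, the expected gate would refuse the request, while the behavior described in the post still serves it.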

Slides from my HOPE/X Talk

On July 18, 2014 by Jonathan Zdziarski

Identifying Backdoors, Attack Points, and Surveillance Mechanisms in iOS Devices

In addition to the slides, you may be interested in the journal paper published in the International Journal of Digital Forensics and Incident Response. Please note: they charge a small fee for all copies of their journal papers; I don’t actually make anything off of