Apple Confirms “Backdoors”; Downplays Their Severity

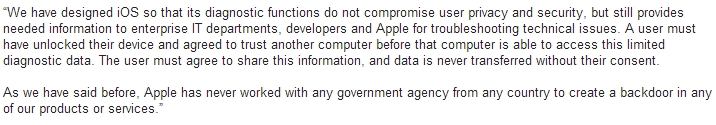

On July 23, 2014 by Jonathan ZdziarskiApple responded to allegations of hidden services running on iOS devices with this knowledge base article. In it, they outlined three of the big services that I outlined in my talk. So again, Apple has, in a traditional sense, admitted to having backdoors on the device specifically for their own use.

A backdoor simply means that it’s an undisclosed mechanism that bypasses some of the front end security to make access easier for whoever it was designed for (OWASP has a great presentation on backdoors, where they are defined like this). It’s an engineering term, not a Hollywood term. In the case of file relay (the biggest undisclosed service I’ve been barking about), backup encryption is being bypassed, as well as basic file system and sandbox permissions, and a separate interface is there to simply copy a number of different classes of files off the device upon request; something that iTunes (and end users) never even touch. In other words, this is completely separate from the normal interfaces on the device that end users talk to through iTunes or even Xcode. Some of the data Apple can get is data the user can’t even get off the device, such as the user’s photo album that’s synced from a desktop, screenshots of the user’s activity, geolocation data, and other privileged personal information that the device even protects from its own users from accessing. This weakens privacy by completely bypassing the end user backup encryption that consumers rely on to protect their data, and also gives the customer a false sense of security, believing their personal data is going to be encrypted if it ever comes off the device.

Apple Responds

On July 21, 2014 by Jonathan ZdziarskiIn a response from Apple PR to journalists about my HOPE/X talk, it looks like Apple might have inadvertently admitted that, in the most widely accepted sense of the word, they do indeed have backdoors in iOS, however claim that the purpose is for “diagnostics” and “enterprise”.

The problem with this is that these services dish out data (and bypass backup encryption) regardless of whether or not “Send Diagnostic Data to Apple” is turned on or off, and whether or not the device is managed by an enterprise policy of any kind. So if these services were intended for such purposes, you’d think they’d only work if the device was managed/supervised or if the user had enabled diagnostic mode. Unfortunately this isn’t the case and there is no way to disable these mechanisms. As a result, every single device has these features enabled and there’s no way to turn them off, nor are users prompted for consent to send this kind of personal data off the device.

Slides from my HOPE/X Talk

On July 18, 2014 by Jonathan ZdziarskiIdentifying Backdoors, Attack Points, and Surveillance Mechanisms in iOS Devices In addition to the slides, you may be interested in the journal paper published in the International Journal of Digital Forensics and Incident Response. Please note: they charge a small fee for all copies of their journal papers; I don’t actually make anything off of

My Talk at HOPE X

On June 9, 2014 by Jonathan ZdziarskiI’ll be speaking at the HOPE (Hackers on Planet Earth) big X conference this year, July 18-20 in NYC. Below is a summary of my talk. It’s based on this published journal paper. Hope to see you there! Identifying Back Doors, Attack Points, and Surveillance Mechanisms in iOS Devices The iOS operating system has long

The Importance of Forensic Tools Validation

On March 28, 2014 by Jonathan ZdziarskiI recently finished consulting on a rather high profile case, and once again found myself spending almost as much time correcting reports from third party forensic tools vendors as I did analyzing actual evidence. It’s even sadder that I charged less for my services than these tools manufacturers charge for a single license of their buggy software. I don’t say high profile to sound important, I say it because these types of cases are generally of great importance themselves, and you absolutely need the evidence to be accurate. Many in the law enforcement community have learned to “trust the tools”, citing scientific method and all that. The problem I’ve found throughout my entire career in forensics, however, has shown me quite the opposite. When it comes to forensic software, the judge should not automatically trust the forensic tools as part of the scientific process, and neither should the forensic examiners using them. Let me explain why…

In forensics, we often misplace our trust in tools that, unlike tried and true scientific methods, are usually closed source. While true scientific process relies on making our findings repeatable and verifiable, the methods to analyze data are sometimes patented, and almost always considered trade secrets. This is the complete opposite of the scientific method, where methods are fully explained and documented. In the software industry, repeatable is exactly what you don’t want your methods to be – especially by your competitors. The nature of secrecy in the software industry doesn’t rub well against the open scientific nature that you’d expect to find in forensic, or other scientific disciplines.As such, “software” is not scientific in nature, and should not be trusted using the same rules as science. Sure, we have some validation experts out there. NIST does a good job of validating logical data acquired from a number of devices and has struck some good and interesting results that have helped the industry. Even still, such tests are only a single data point on an ever evolving software manufacturing process riddled with regression bugs and programming errors that only show up in certain specific data sets.

Journal Paper Published

On March 12, 2014 by Jonathan ZdziarskiThe International Journal of Digital Forensics and Incident Response has formally accepted and published my paper titled Identifying Back Doors, Attack Points, and Surveillance Mechanisms in iOS Devices. This paper is a compendium of services and mechanisms used by many law enforcement agencies and in open source, of modern forensic techniques to create a forensic

Forensics Tools: Stop Miscalculating iOS Usage Analytics!

On January 22, 2014 by Jonathan ZdziarskiThere are some great forensics tools out there… and also some really crummy ones. I’ve found an incredible amount of wrong information in the often 500+ page reports some of these tools crank out, and often times the accuracy of the data is critical to one of the cases I’m assisting with at the time. Tools validation is critical to the healthy development cycle of a forensics tool, and unfortunately many companies don’t do enough of it. If investigators aren’t doing their homework to validate the information (and subsequently provide feedback to the software manufacturer), the consequences could mean an innocent person goes to jail, or a guilty one goes free. No joke. This could have happened a number of times had I not caught certain details.

Today’s reporting fail is with regards to the application “usage” information stored in iOS in the ADDataStore.sqlitedb file. At least a couple forensics tools are misreporting this data so as to be up to 26 hours or more off.

Fingerprint Reader / PIN Bypass for Enterprises Built Into iOS 7

On September 18, 2013 by Jonathan ZdziarskiWith iOS 7 and the new 5s come a few new security mechanisms, including a snazzy fingerprint reader and a built-in “trust” mechanism to help prevent juice jacking. Most people aren’t aware, however, that with so much new consumer security also come new bypasses in order to give enterprises access to corporate devices. These are in your phone’s firmware, whether it’s company owned or not, and their security mechanisms are likely also within the reach of others, such as government agencies or malicious hackers. One particular bypass appears to bypass both the passcode lock screen as well as the fingerprint locking mechanism, to grant enterprises access to their devices while locked. But at what cost to the overall security of consumer devices?

Injecting Reveal With MobileSubstrate

On June 3, 2013 by Jonathan ZdziarskiReveal is a cool prototyping tool allowing you to perform runtime inspection of an iOS application. At the moment, its functionality revolved primarily around user interface design, allowing you to manage user interface objects and their behavior. It is my hope that in the future, Reveal will expand to be a full featured debugging tool, allowing pen-testers to inspect and modify instance variables in memory, instantiate new objects, invoke methods, and generally hack on the runtime of an iOS application. At the moment, it’s still a pretty cool user interface design aid. Reveal is designed to be linked with your project, meaning you have to have the source code of the application you want to inspect. This is a quick little instructional on how to link the reveal framework with any existing application on your iOS device, so that you can inspect it without source.

How Juice Jacking Works, and Why It’s a Threat

On June 3, 2013 by Jonathan ZdziarskiHow ironic that only a week or two after writing an article about pair locking, we would see this talk coming out of Black Hat 2013, demonstrating how juice jacking can be used to install malicious software. The talk is getting a lot of buzz with the media, but many security guys like myself are scratching our heads wondering why this is being considered “new” news. Granted, I can only make statements based on the abstract of the talk, but all signs seem to point to this as a regurgitation of the same type of juice jacking talks we saw at DefCon two years ago. Nevertheless, juice jacking is not only technically possible, but has been performed in the wild for a few years now. I have my own juice jacking rig, which I use for security research, and I have also retrofitted my iPad Mini with a custom forensics toolkit, capable of performing a number of similar attacks against iOS devices. Juice jacking may not be anything new, but it is definitely a serious consideration for potential high profile targets, as well as for those serious about data privacy.

Free Download: iOS Forensic Investigative Methods

On May 6, 2013 by Jonathan ZdziarskiGiven the vast amount of loose knowledge now out there in the community, and the increasing number of commercial tools available to conduct both law enforcement and private sector acquisition of an iOS device, I’ve decided to make my law enforcement guide, “iOS Forensic Investigative Methods” freely available to all. The manual contains a lot

iOS Counter Forensics: Encrypting SMS and Other Crypto-Hardening

On May 2, 2013 by Jonathan ZdziarskiAs I explained in a recent blog post, your iOS device isn’t as encrypted as you think. In fact, nearly everything except for your email database and keychain can (and often is) recovered by Apple under subpoena (your device is either sent to or flown to Cupertino, and a copy of its hard drive contents are provided to law enforcement). Depending on your device model, a number of existing forensic imaging tools can also be used to scrape data off of your device, some of which are pirated in the wild. Lastly, a number of jailbreak and hacking tools, and private exploits can get access to your device even if it is protected with a passcode or a PIN. The only thing protecting your data on the device is the tiny sliver of encryption that is applied to your email database and keychain. This encryption (unlike the encryption used on the rest of the file system) makes use of your PIN or passphrase to encrypt the data in such a way that it is not accessible until you first enter it upon a reboot. Because nearly every working forensics or hacking tool for iOS 6 requires a reboot in order to hijack the phone, your email database file and keychain are “reasonably” secure using this better form of encryption.

While I’ve made remarks in the past that Apple should incorporate a complex boot passphrase into their iOS full disk encryption, like they do with File Vault, it’s fallen on deaf ears, and we will just have to wait for Apple to add real security into their products. It’s also beyond me why Apple decided that your email was the only information a consumer should find “valuable” enough to encrypt. Well, since Apple doesn’t have your security in mind, I do… and I’ve put together something you can do to protect the remaining files on your device. This technique will let you turn on the same type of encryption used on your email index for other files on the device. The bad news is that you have to jailbreak your phone to do it, which reduces the overall security of the device. The good news is that the trade-off might be worth it. When you jailbreak, not only can unsigned code run on the device, but App Store applications running inside the sandbox will have access to much more personal data they previously didn’t have access to, due to certain sandbox patches that are required in order to make the jailbreak work. This makes me feel uneasy, given the amount of spyware that’s already been found in the App Store… so you’ll need to be careful what you install if you’re going to jailbreak. The upside is that , by protecting other files on your device with Data-Protection encryption, forensic recovery will be highly unlikely without knowledge of (or brute forcing) your passphrase. Files protected this way are married to your passphrase, and so even with physical possession of your device, it’s unlikely they’d be recoverable.

Introduction to iOS Binary Patching (Part 1)

On April 28, 2013 by Jonathan ZdziarskiPart of my job as a forensic scientist is to hack applications. When working some high profile cases, it’s not always that simple to extract data right off of the file system; this is especially true if the data is encrypted or obfuscated in some way. In such cases, it’s sometimes easier to clone the file system of a device and perform what some would call “forensic hacking”; there are often many flaws within an application that can be exploited to convince the application to unroll its own data. We also perform a number of red-team pen-tests for financial/banking, government, and other customers working with sensitive data, where we (under contract) attack the application (and sometimes the servers) in an attempt to test the system’s overall security. More often than not, we find serious vulnerabilities in the applications we test. In the time I’ve spent doing this, I’ve seen a number of applications whose encryption implementations have been riddled with holes, allowing me to attack the implementation rather than the encryption itself (which is much harder).

There are a number of different ways to manipulate an iOS application. I wrote about some of them in my last book, Hacking and Securing iOS Applications . The most popular (and expedient) method involves using tools such as Cycript or a debugger to manipulate the Objective-C runtime, which I demonstrated in my talk at Black Hat 2012 (slides). This is very easy to do, as the entire runtime funnels through only a handful of runtime C functions. It’s quite simple to hijack an application’s program flow, create your own objects, or invoke methods within an application. Often times, tinkering with the runtime is more than enough to get what you want out of an application. The worst example of security I demonstrated in my book was one application that simply decrypted and loaded all of its data with a single call to an application’s login function, [ OneSafeAppDelegate userIsLogged: ]. Manipulating the runtime will only get you so far, though. Tools like Cycript only work well at a method level. If you’re trying to override some logic inside of a method, you’ll need to resort to a debugger. Debugging an application gives you more control, but is also an interactive process; you’ll need to repeat your process every time you want to manipulate the application (or write some fancy scripts to do it). Developers are also getting a little trickier today in implementing jailbreak detection and counter-debugging techniques, meaning you’ll have to fight through some additional layers just to get into the application.

This is where binary patching comes in handy. One of the benefits to binary patching is that the changes to the application logic can be made permanent within the binary. By changing the program code itself, you’re effectively rewriting the application. It also lets you get down to a machine instruction level and manipulate registers, arguments, comparison operations, and other granular logic. Binary patching has been used historically to break applications’ anti-piracy mechanisms, but is also quite useful in the fields of forensic research as well as penetration testing. If I can find a way to patch an application to give me access to certain evidence that it wouldn’t before, then I can copy that binary back to the original device (if necessary) to extract a copy of the evidence for a case, or provide the investigator with a device that has a permanently modified version of the application they can use for a specific purpose. For our pen-testing clients, I can provide a copy of their own modified binary, accompanied by a report demonstrating how their application was compromised, and how they can strengthen the security for what will hopefully be a more solid production release.

Your iOS device isn’t as encrypted as you think

On April 18, 2013 by Jonathan ZdziarskiThis should help clear up the common misconception that data is encrypted and secured in iOS. While it’s true that iOS does sport an encrypted file system, that file system is virtually always unlocked from the moment the operating system boots up, as the OS (and your applications) need access to it. Even when the device is locked with your PIN or passphrase, the encrypted file system is readable to the operating system – what this means is that your data is NOT encrypted using an encryption that depends on your password – at least for the most part. Apple adds a second layer of encryption on top of this file system called Data-Protection. Apple’s Data-Protection encryption has the ability to protect a file while the device is locked by encrypting it with a key that is only available when you’ve entered your PIN or passphrase. While a PIN can be brute forced, a passphrase is much stronger.

So what’s the problem? Well, as of even the latest versions of iOS, the only files protected with this secondary encryption is your mail index, the keychain itself, and third party application files specifically tagged (by the developer) as protected with Data-Protection. Virtually everything else (your contacts, SMS, spotlight cache, photos, and so on) remain unprotected. To demonstrate this, I’ve put together a small recipe you can run on your own jailbroken device to bypass the lock screen. You can then use the GUI to browse through all of the data on the device, without ever providing your PIN. The only thing you’ll not be able to access are the files I’ve just mentioned. This lock screen bypass isn’t really a vulnerability in and of itself; it’s just one of many ways I can demonstrate to you that you don’t need a passphrase to view a vast majority if the data on your phone.

The Dark Art of iOS Application Hacking

On May 27, 2012 by Jonathan ZdziarskiI’ll be giving the talk, The Dark Art of iOS Application Hacking at Black Hat 2012 in Las Vegas this July. This workshop will cover many techniques we use to attack iOS applications, and has numerous applications in the security and government fields; everything from pen-testing to forensic hacking and surveillance for national security related

National Institute of Justice Validates Zdziarski Method

On June 2, 2011 by Jonathan ZdziarskiRick Ayers at NIST has validated the iPhone forensics tools law enforcement have been using for a few years now. This is quite an honor, not only to know that the tools are considered sound by a government standards entity, but also that this research has been important enough to the community for it to

Al Capone’s Original Thompson Machine Gun

On May 4, 2010 by Jonathan ZdziarskiJust when I thought my trip to Chicago would be average, some of the sergeants at the Chicago Police Training Academy, whom I’m training in iPhone forensic investigative methods, took me to the firing range in the basement and brought out an old dusty case. What came out of that case was an amazing piece

Bypassing iPhone 3G[s] Encryption

On July 24, 2009 by Jonathan ZdziarskiBypassing Passcode and Backup Encryption: http://www.youtube.com/watch?v=5wS3AMbXRLs Forensic Recovery of Raw Disk: http://www.youtube.com/watch?v=kHdNoKIZUCw What Data Can You Steal From an iPhone in 2 Minutes? http://www.youtube.com/watch?v=34f47m-lYSg These YouTube videos demonsrate just how easy it is to bypass the passcode and backup encryption in an iPhone 3G[s] within only a couple of minutes’ time. A second video shows

Full Disclosure and Why Vendors Hate it

On May 1, 2008 by Jonathan ZdziarskiI recently did a talk at O’Reilly’s Ignite Boston party about the exciting iPhone forensics community emerging in law enforcement circles. With all of the excitement came shame, however; not for me, but for everyone in the audience who had bought an iPhone and put something otherwise embarrassing or private on it. Very few people, it seemed, were fully aware of just how much personal data the iPhone retains, in spite of the fact that Apple has known about it for quite some time. In spite of the impressive quantities of beer that get drunk at Tommy Doyle’s, I was surprised to find that many people were sober enough to turn their epiphany about privacy into a discussion about full disclosure. This has been a hot topic in the iPhone development community lately, and I have spent much time pleading with the different camps to return to embracing the practice of full disclosure.

Tales From the Apple Store

On March 25, 2008 by Jonathan ZdziarskiLast night marked a unique event in history. The Apple Store in Cambridge MA allowed me to come in through the front door and deliver a keynote to some 200+ people as they hosted the Mobile Monday Boston conference. In spite of the sheer chaos of fitting so many people into such a small store, and the generally poor acoustics of a mall, what the conference lacked in elegance was quickly made up for in quality of content.